👋 Hey there, I’m Dheeraj Choudhary, an AI/ML educator, cloud enthusiast, and content creator on a mission to simplify tech for the world.

After years of building on YouTube and LinkedIn, I’ve finally launched TechInsight Neuron, a no-fluff, insight-packed newsletter where I break down the latest in AI, Machine Learning, DevOps, and Cloud.

What to expect: actionable tutorials, tool breakdowns, industry trends, and career insights, all crafted for engineers, builders, and the curious.

If you're someone who learns by doing and wants to stay ahead in the tech game, you're in the right place.

Introduction

Most developers learn Docker by running commands first and understanding the internals later. That works up to a point. But the moment something breaks, or you need to debug a container that won't start, or a pull fails silently, you really want to know what's actually happening under the hood. Docker's architecture isn't complicated once you see it as a set of distinct, cooperating components rather than one monolithic black box.

This guide breaks down each piece: the Docker Client, the Docker Daemon (dockerd), Docker images, containers, and the registry. More importantly, it explains how they talk to each other and why each one exists. By the time you finish, commands like docker run and docker build won't feel like magic anymore.

The Big Picture: Docker's Client-Server Architecture

Docker is built on a client-server model. There are three main components, and each one has a very specific job:

Docker Client is the CLI you type commands into. It doesn't do any heavy lifting itself. It just translates your commands into API requests and sends them to the daemon.

Docker Daemon (dockerd) is the background service that actually runs on your machine. It receives those API requests, manages images, creates containers, handles networks and volumes, and communicates with the container runtime to make things happen at the kernel level.

Docker Registry is where images live. Docker Hub is the default public one. When the daemon needs an image it doesn't have locally, it fetches it from the registry.

A good analogy for how these three interact: think of a restaurant. You are the customer. The Docker Client is the waiter who takes your order. The Docker Daemon is the kitchen that actually prepares the food. The registry is the warehouse where ingredients are stored. You never talk to the kitchen directly. You tell the waiter, and the kitchen handles the rest.

Docker Images: The Blueprint

A Docker image is a read-only, layered template that contains everything needed to run an application: the OS base, runtime, libraries, application code, environment variables, and configuration. You don't run images directly. You use them to create containers.

The layered structure is one of the most important things to understand about images. Each instruction in a Dockerfile that changes the filesystem (such as RUN, COPY, and ADD) adds a new immutable layer on top of the previous one. Instructions like CMD and ENV only record metadata in the image configuration.

Consider a simple example:

# Layer 1: base Ubuntu OS
FROM ubuntu:22.04

# Layer 2: Node.js runtime
RUN apt-get update && apt-get install -y nodejs

# Layer 3: your application code
COPY . /app

# Metadata only: the startup command
CMD ["node", "/app/index.js"]

Each of those steps is cached. If you rebuild the image and only your application code changes, Docker reuses the first two layers from cache and reruns only the COPY step and whatever follows it. This is why Docker builds are fast after the first time.
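The caching rule can be sketched in a few lines of Python. This is a toy model, not how Docker's builder is actually implemented: it assumes each step's cache key is a hash of the parent key plus the instruction text, and that COPY/ADD steps also mix in a digest of the build context. The names `layer_keys`, `cache_hits`, and the `context_digest` values are made up for illustration.

```python
import hashlib

def layer_keys(steps, context_digest):
    """Return one cache key per build step.

    Each key hashes the parent key plus the instruction text. COPY/ADD steps
    also mix in a digest of the build context, so changed files change the key.
    """
    keys = []
    parent = ""
    for instruction in steps:
        material = parent + "\n" + instruction
        if instruction.split()[0] in ("COPY", "ADD"):
            material += "\n" + context_digest
        parent = hashlib.sha256(material.encode()).hexdigest()
        keys.append(parent)
    return keys


def cache_hits(old_keys, new_keys):
    """Mark each step True if its key is unchanged from the previous build."""
    return [old == new for old, new in zip(old_keys, new_keys)]


STEPS = [
    "FROM ubuntu:22.04",
    "RUN apt-get update && apt-get install -y nodejs",
    "COPY . /app",
    'CMD ["node", "/app/index.js"]',
]

first_build = layer_keys(STEPS, context_digest="app-v1")
second_build = layer_keys(STEPS, context_digest="app-v2")  # only app code changed
print(cache_hits(first_build, second_build))  # [True, True, False, False]
```

Because every key depends on its parent, one changed step invalidates everything after it. That is the usual argument for putting rarely changing instructions near the top of a Dockerfile.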

The layering also enables sharing. If ten different images all use the same ubuntu:22.04 base layer, that layer is stored exactly once on disk. Each image just references it. This is what keeps Docker storage efficient even when you have dozens of images locally.

Images are immutable. Once built, a specific image layer never changes. When you want a new version of your application, you build a new image. The old one stays untouched.
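Here is a rough sketch of why sharing falls out of the design. It assumes a content-addressed store where a layer's key is the SHA-256 of its bytes. `LayerStore` and the placeholder byte strings are invented for this example, and real layers are compressed tarballs rather than short strings.

```python
import hashlib

class LayerStore:
    """Toy content-addressed store: identical layer content is kept once."""

    def __init__(self):
        self.blobs = {}    # digest -> layer content
        self.images = {}   # image name -> ordered list of layer digests

    def add_image(self, name, layers):
        digests = []
        for content in layers:
            digest = "sha256:" + hashlib.sha256(content).hexdigest()
            self.blobs.setdefault(digest, content)  # no-op if already stored
            digests.append(digest)
        self.images[name] = digests


store = LayerStore()
base = b"ubuntu:22.04 root filesystem (placeholder bytes)"
for i in range(10):
    store.add_image(f"app{i}:1.0", [base, f"app {i} code".encode()])

print(len(store.images), "images,", len(store.blobs), "stored layers")
# 10 images, 11 stored layers
```

Ten images reference twenty layers in total, but only eleven distinct blobs are stored: one shared base plus ten application layers.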

Images are identified by name and tag, like nginx:1.25 or postgres:16-alpine. The tag is the version. If you omit the tag, Docker defaults to latest, which is convenient but can cause subtle versioning issues in production. Pinning an exact tag is always the safer practice.
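To make the defaulting rules concrete, here is a simplified parser for image references. It is a sketch, not Docker's actual reference grammar: `parse_image_ref` is a hypothetical helper, and the rule for spotting a registry host (a first path component containing `.` or `:`, or equal to `localhost`) is a deliberate simplification.

```python
def parse_image_ref(ref):
    """Split an image reference into (registry, repository, tag, digest).

    Simplified: a first path component counts as a registry host only if it
    contains '.' or ':' or equals 'localhost'.
    """
    digest = None
    if "@" in ref:
        ref, digest = ref.split("@", 1)

    tag = None
    last_slash = ref.rfind("/")
    last_colon = ref.rfind(":")
    if last_colon > last_slash:          # a colon before the last '/' is a port
        ref, tag = ref[:last_colon], ref[last_colon + 1:]

    registry = "docker.io"
    first, _, rest = ref.partition("/")
    if rest and ("." in first or ":" in first or first == "localhost"):
        registry, ref = first, rest

    if registry == "docker.io" and "/" not in ref:
        ref = "library/" + ref           # official images live under library/

    if tag is None and digest is None:
        tag = "latest"                   # the implicit default tag
    return registry, ref, tag, digest


print(parse_image_ref("nginx"))
# ('docker.io', 'library/nginx', 'latest', None)
print(parse_image_ref("postgres:16-alpine"))
# ('docker.io', 'library/postgres', '16-alpine', None)
print(parse_image_ref("localhost:5000/myapp"))
# ('localhost:5000', 'myapp', 'latest', None)
```

The first result shows how much is implied when you type just nginx: a registry, a namespace, and a moving tag.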

Docker Containers: The Running Instance

A container is what you get when you take a Docker image and actually run it. The daemon adds a thin writable layer on top of the image's read-only layers, starts an isolated process, and that process becomes your running container.

Every container gets its own isolated environment:

Its own filesystem, built from the image layers plus the writable layer on top

Its own process tree, so processes inside the container can't see processes on the host

Its own network stack, with its own IP address and network interfaces

Its own hostname

All of this isolation is provided by Linux kernel features: namespaces handle the visibility isolation (process tree, network, filesystem), and control groups (cgroups) handle resource limits (how much CPU, memory, and disk I/O the container is allowed to use).
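You can see the namespace side of this on any Linux machine without touching Docker. The sketch below reads the symlinks under /proc/<pid>/ns, where each target looks like `pid:[4026531836]`. The function names are my own, it returns an empty dict on systems without /proc, and it does not cover cgroups.

```python
import os
import re

def parse_ns_link(target):
    """Parse a /proc/<pid>/ns symlink target such as 'pid:[4026531836]'."""
    match = re.fullmatch(r"(\w+):\[(\d+)\]", target)
    if match is None:
        raise ValueError(f"unexpected namespace link: {target!r}")
    return match.group(1), int(match.group(2))


def current_namespaces(pid="self"):
    """Map each entry under /proc/<pid>/ns to its namespace inode id.

    Returns an empty dict where /proc is unavailable (for example, off Linux).
    """
    ns_dir = f"/proc/{pid}/ns"
    try:
        entries = os.listdir(ns_dir)
    except OSError:
        return {}
    result = {}
    for entry in entries:
        try:
            _kind, inode = parse_ns_link(os.readlink(os.path.join(ns_dir, entry)))
        except (OSError, ValueError):
            continue
        result[entry] = inode
    return result


for name, inode in sorted(current_namespaces().items()):
    print(f"{name:20} {inode}")
```

Two processes share a namespace exactly when these ids match. If you run this on the host and again inside a container, the ids for pid, net, mnt, uts, and ipc will typically differ.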

One image can spawn as many containers as you want. They all share the same read-only image layers at the bottom, but each container has its own independent writable layer on top. Changes made inside one container are completely invisible to other containers running from the same image.

The writable layer is also ephemeral. When you delete a container, that layer is gone. If you wrote files inside the container during its lifetime, those files disappear too. This is why Docker volumes exist: to give containers a way to persist data that survives beyond the container's lifecycle.
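A small model helps here. The sketch below uses Python's `collections.ChainMap` as a stand-in for a union filesystem: lookups fall through from the writable layer to the read-only image layers, and writes always land in the top layer. The file paths and contents are made up, and real OverlayFS works on files and directories rather than dictionary keys.

```python
from collections import ChainMap

# Read-only image layers, topmost first (path -> file contents).
IMAGE_LAYERS = (
    {"/app/index.js": "console.log('hi')"},   # application layer
    {"/etc/os-release": "Ubuntu 22.04"},      # base layer
)

def create_container():
    """Return a filesystem view: a fresh writable layer over the image layers."""
    writable = {}
    return ChainMap(writable, *IMAGE_LAYERS)

a = create_container()
b = create_container()

a["/tmp/scratch.txt"] = "only in a"     # new file lands in a's writable layer
a["/etc/os-release"] = "patched in a"   # override shadows the image's copy

print(a["/etc/os-release"])             # patched in a
print(b["/etc/os-release"])             # Ubuntu 22.04
print("/tmp/scratch.txt" in b)          # False
print(a.maps[0])                        # everything container a ever wrote
```

Everything container a changed lives in `a.maps[0]`. Throw that dictionary away and the changes are gone, while the image layers and container b never noticed. Anything that must survive has to live outside that layer, and a volume does exactly that.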

A pizza analogy fits here: the image is the recipe, and the container is the actual pizza. You can make as many pizzas as you want from one recipe. Each pizza is distinct once it's been made.

Image vs Container: The Key Differences

This distinction trips up a lot of developers early on, so it's worth being explicit about it.

| | Docker Image | Docker Container |
|---|---|---|
| What it is | Blueprint / template | Running instance |
| State | Read-only, static | Has a writable layer, dynamic |
| Where it lives | Stored on disk | Runs in memory |
| Runs processes? | No | Yes |
| Reusability | Shared across many containers | Independent, isolated |
| Lifecycle | Created once, versioned | Started, stopped, deleted |

The practical implication: you build images once (or pull them from a registry), and you create containers from them on demand. When a container finishes its job or crashes, you don't rebuild the image. You just spin up a new container from the same image.

The Docker Daemon (dockerd): The Engine Room

The Docker Daemon, referred to as dockerd, is the persistent background process that makes everything work. It runs on the Docker host and listens for API requests, typically over a Unix socket at /var/run/docker.sock on Linux.

When you type any docker command, you're not directly doing anything to containers or images. You're sending an HTTP request to the daemon. The daemon receives it, figures out what needs to happen, and goes to work.

The daemon is responsible for:

Building images from Dockerfiles, layer by layer

Creating and running containers by delegating to the container runtime

Managing the full container lifecycle: starting, stopping, restarting, and deleting containers

Handling networks: creating bridge networks, assigning IP addresses, managing DNS between containers

Managing volumes: creating and attaching persistent storage to containers

Communicating with registries: pulling images down and pushing images up

The daemon can also communicate with other daemons, which is how Docker Swarm works when you need to coordinate containers across multiple machines.

One important nuance: the daemon doesn't do the lowest-level container creation work itself. It delegates that to containerd, which in turn uses runc. Understanding this separation helps when you read Docker logs or encounter errors from these sub-components.

Under the Daemon: containerd and runc

Saying the daemon does "the actual work" is accurate at a high level. But there's a layer worth knowing about beneath dockerd, because it explains a lot about how Docker behaves and why it's designed the way it is.

When dockerd needs to create a container, it doesn't directly call the Linux kernel. It delegates to containerd, a separate background daemon that manages the actual container lifecycle. containerd handles pulling and storing images, creating container filesystem snapshots, and managing the running container processes.

When containerd needs to actually create a new container process, it calls runc, which is a lightweight command-line tool that directly interfaces with the Linux kernel. runc uses the clone() syscall to create a new process with isolated namespaces, applies cgroup limits, switches the process into the container's root filesystem (typically an OverlayFS mount prepared for it by the layers above), and starts the container's entry process.

The full chain looks like this:

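Docker CLI → dockerd → containerd → runc → Linux kernel (namespaces + cgroups)

There's also a component called containerd-shim that sits between containerd and each running container. Its job is to decouple the container process from the daemons above it, so the container can keep running even if containerd or dockerd crashes or restarts. By default dockerd stops its containers when it shuts down, so surviving a planned daemon restart also depends on enabling Docker's live-restore option. The shim also manages the container's stdin/stdout streams and reports exit status back to the daemon.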

Why does this layered design exist? Before 2016, Docker was a single monolithic binary doing everything. The split into dockerd, containerd, and runc happened as Docker and the industry moved toward standardization through the Open Container Initiative (OCI). runc is the reference implementation of the OCI Runtime Specification, meaning any OCI-compliant runtime can be swapped in. This is how alternative runtimes like Kata Containers (which runs containers inside lightweight VMs for stronger isolation) can plug into the same Docker workflow.
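To give a feel for what an OCI runtime consumes, here is a sketch that builds a pared-down config.json as a Python dict. The field names follow the OCI Runtime Specification, but this is not a complete or validated config: a real one generated for runc carries many more fields (mounts, user, capabilities). The helper name and the nginx arguments are illustrative assumptions.

```python
import json

def minimal_oci_config(args, hostname, memory_limit_bytes):
    """Build a pared-down OCI runtime config as a plain dict (illustrative only)."""
    return {
        "ociVersion": "1.0.2",
        "process": {"args": list(args), "cwd": "/", "env": ["PATH=/usr/bin:/bin"]},
        "root": {"path": "rootfs", "readonly": False},
        "hostname": hostname,
        "linux": {
            # One entry per namespace the runtime should create.
            "namespaces": [
                {"type": t} for t in ("pid", "network", "mount", "uts", "ipc")
            ],
            # cgroup limits; only memory is shown here.
            "resources": {"memory": {"limit": memory_limit_bytes}},
        },
    }

config = minimal_oci_config(["nginx", "-g", "daemon off;"], "web", 256 * 1024 * 1024)
print(json.dumps(config, indent=2))
```

The takeaway is that namespaces and cgroup limits are plain, declarative data in this file. Any runtime that understands the same document can stand in for runc.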

The Docker Client: Your Interface to All of It

The Docker Client (the docker CLI) is simpler than it might seem. It's fundamentally a REST API client. Every command you type gets translated into an HTTP request sent to the daemon's API.

docker run nginx

# Becomes: POST /containers/create + POST /containers/{id}/start

# Sent to: http+unix:///var/run/docker.sock

The client can connect to a local daemon (via the Unix socket) or a remote daemon (via TCP). Docker Desktop on Mac and Windows runs the daemon inside a lightweight Linux VM and configures the client to connect to it transparently.
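Since the client is just an HTTP client, you can imitate a tiny part of it with Python's standard library. This is a minimal sketch, not the CLI's real implementation: `resolve_endpoint`, `UnixHTTPConnection`, and `daemon_version` are names I made up, the fallback port 2375 is an assumption for when DOCKER_HOST omits one, and TLS and API version negotiation are left out.

```python
import http.client
import json
import os
import socket
from urllib.parse import urlparse

DEFAULT_HOST = "unix:///var/run/docker.sock"

def resolve_endpoint(env=None):
    """Return ('unix', path) or ('tcp', (host, port)) based on DOCKER_HOST."""
    env = os.environ if env is None else env
    url = urlparse(env.get("DOCKER_HOST") or DEFAULT_HOST)
    if url.scheme == "unix":
        return "unix", url.path
    if url.scheme == "tcp":
        return "tcp", (url.hostname, url.port or 2375)
    raise ValueError(f"unsupported DOCKER_HOST scheme: {url.scheme!r}")


class UnixHTTPConnection(http.client.HTTPConnection):
    """HTTPConnection that talks over a Unix domain socket instead of TCP."""

    def __init__(self, socket_path):
        super().__init__("localhost")  # host is only used for the Host header
        self.socket_path = socket_path

    def connect(self):
        self.sock = socket.socket(socket.AF_UNIX, socket.SOCK_STREAM)
        self.sock.connect(self.socket_path)


def daemon_version(socket_path):
    """GET /version from a local daemon and return the decoded JSON body."""
    conn = UnixHTTPConnection(socket_path)
    try:
        conn.request("GET", "/version")
        return json.loads(conn.getresponse().read())
    finally:
        conn.close()


print(resolve_endpoint({}))
print(resolve_endpoint({"DOCKER_HOST": "tcp://10.0.0.5:2376"}))
```

On a machine with a running daemon and permission to read the socket, `daemon_version("/var/run/docker.sock")` returns the same information that docker version prints for the server side.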

The most commonly used client commands, and what they actually do:

# Creates a container from an image and starts it

docker run <image>

# Builds an image from a Dockerfile in the current directory

docker build -t myapp:1.0 .

# Downloads an image from the registry to local cache

docker pull nginx:1.25

# Lists currently running containers

docker ps

# Lists all containers including stopped ones

docker ps -a

# Uploads a local image to a registry

docker push myusername/myapp:1.0

# Shows logs from a running or stopped container

docker logs <container_id>

# Opens a shell inside a running container

docker exec -it <container_id> bash

Docker Compose talks to the daemon the same way. When you run docker compose up, Compose reads your docker-compose.yml, figures out what containers to create, and makes the same kind of API calls to dockerd that the CLI would make directly.

Docker Registry and Docker Hub

A Docker registry is a storage and distribution service for Docker images. Think of it as a package repository, but for container images instead of code libraries.

Docker Hub (hub.docker.com) is the default public registry. When you run docker pull nginx without specifying a registry URL, Docker looks for the nginx image on Docker Hub. Docker Hub hosts two categories of images:

Official images are maintained directly by the software vendors or by Docker in partnership with them. Images like nginx, postgres, redis, python, node, and ubuntu are official images. They're regularly updated, follow security best practices, and are generally what you should use as base images.

Community images are published by individual users and organizations. They follow the format username/imagename, like bitnami/postgresql. Quality and maintenance vary, so check the pull count, star count, and last update date before relying on a community image in production.

The two main operations with registries:

# Download an image from the registry to your local machine

docker pull postgres:16

# Upload a locally built image to a registry

docker push myusername/myapp:2.0

Before you can push, you need to log in:

docker login

# Prompts for Docker Hub username and password

For private images in production, most teams use a managed private registry rather than Docker Hub's paid private repositories. Options include AWS Elastic Container Registry (ECR), Google Artifact Registry, Azure Container Registry, and the self-hosted open-source Harbor. These integrate with cloud IAM systems and often scan images for vulnerabilities automatically.
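Under the hood, pull and push are HTTP calls against the registry's v2 API. The sketch below only builds the URLs involved, so it runs without network access. `registry_urls` is a hypothetical helper, the digest in the usage line is a placeholder, and authentication (Docker Hub needs a bearer token even for public images) is left out entirely.

```python
def registry_urls(registry, repository, reference):
    """Return the manifest URL and a blob-URL builder for one image."""
    # Docker Hub's registry API is served from registry-1.docker.io.
    host = "registry-1.docker.io" if registry == "docker.io" else registry
    base = f"https://{host}/v2/{repository}"
    manifest_url = f"{base}/manifests/{reference}"

    def blob_url(digest):
        return f"{base}/blobs/{digest}"

    return manifest_url, blob_url


manifest_url, blob_url = registry_urls("docker.io", "library/postgres", "16")
print(manifest_url)
# https://registry-1.docker.io/v2/library/postgres/manifests/16
print(blob_url("sha256:<layer-digest>"))
```

A pull fetches the manifest first, reads the list of layer digests from it, and then downloads each blob it doesn't already have.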

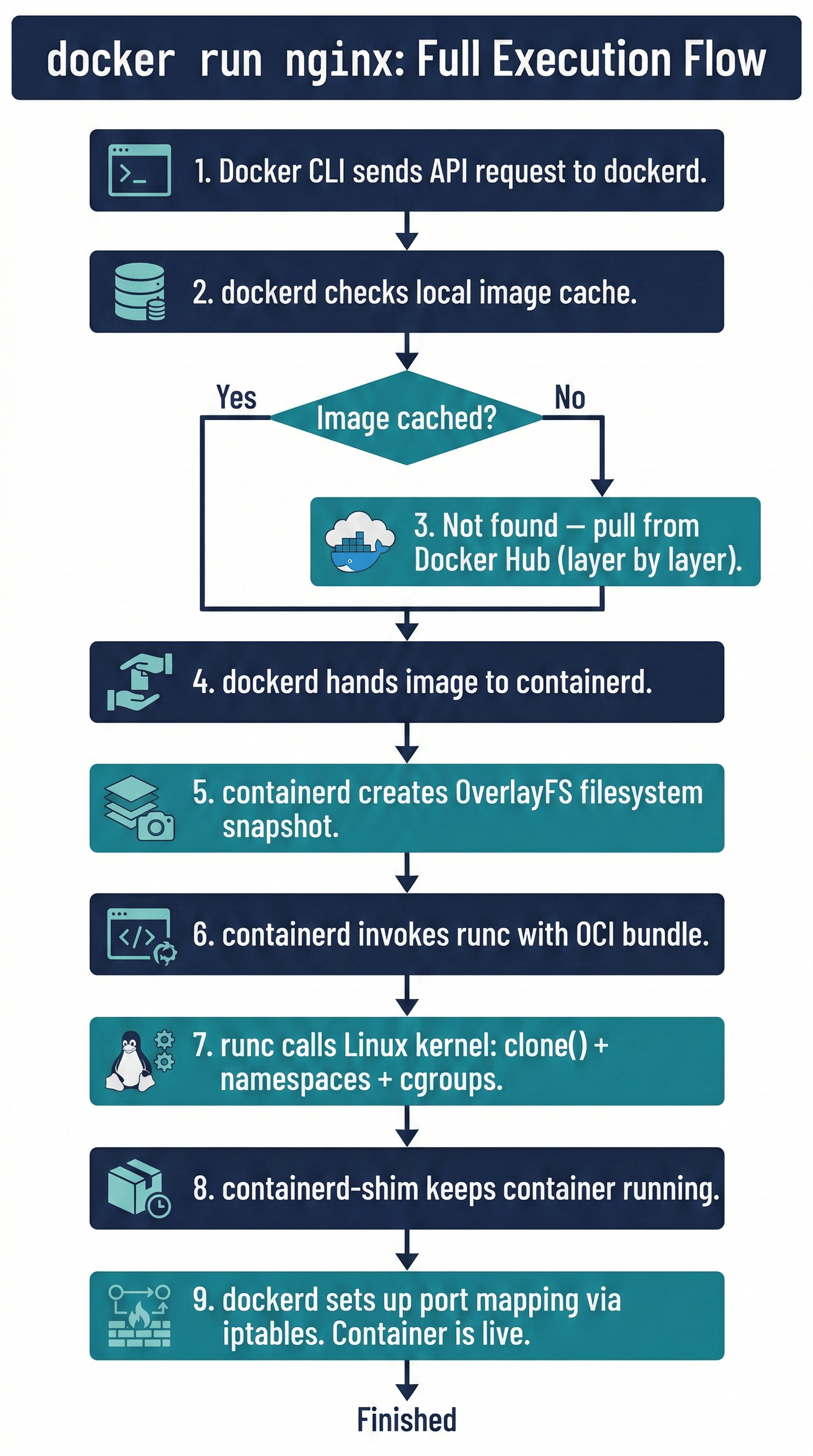

Putting It All Together: What Happens When You Run docker run

Let's trace a single docker run nginx command through the entire architecture from start to finish.

docker run -d -p 8080:80 nginx

Here is the full sequence:

Step 1: Client to Daemon. The Docker CLI translates the command into REST API calls and sends them to dockerd over the Unix socket: first a POST /containers/create request, then POST /containers/{id}/start.

Step 2: Image check. dockerd checks the local image cache. Does nginx:latest exist locally? If yes, skip to step 4. If no, continue to step 3.

Step 3: Pull from registry. dockerd contacts Docker Hub and downloads the nginx image, layer by layer. Each layer is verified against its SHA256 digest to confirm integrity. The layers are stored in /var/lib/docker.

Step 4: Hand off to containerd. dockerd passes the image reference and container configuration (port mapping, environment variables, etc.) to containerd.

Step 5: Filesystem setup. containerd creates a snapshot of the image layers using OverlayFS. It stacks the read-only image layers and adds a fresh writable layer on top. This becomes the container's root filesystem.

Step 6: runc creates the container. containerd invokes runc, passing it an OCI-compliant bundle (a directory containing config.json and the root filesystem). runc calls the Linux kernel's clone() syscall to create a new process with isolated namespaces (PID, network, mount, UTS, IPC). cgroups are applied to limit resource usage.

Step 7: containerd-shim takes over. runc starts the container process and then exits. The containerd-shim process stays attached to the container, keeping it running independently of the daemon.

Step 8: Network setup. dockerd wires up the port mapping: traffic arriving at port 8080 on the host gets forwarded to port 80 inside the container, via iptables rules created by Docker's networking subsystem.

Step 9: Running. nginx is now running in an isolated container. docker ps will show it. docker logs <id> will show its output.
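Steps 2 and 3 are easy to model in isolation. The sketch below checks a local cache first and, on a miss, pulls the layers and verifies each one against its SHA-256 digest before storing it. It is a toy under stated assumptions: `fetch_layers` is a hypothetical callable standing in for the registry, and the cache is a plain dict rather than anything under /var/lib/docker.

```python
import hashlib

def verify_layer(blob, expected_digest):
    """Return True if blob's SHA-256 matches a 'sha256:<hex>' digest string."""
    algorithm, _, expected_hex = expected_digest.partition(":")
    if algorithm != "sha256":
        raise ValueError(f"unsupported digest algorithm: {algorithm!r}")
    return hashlib.sha256(blob).hexdigest() == expected_hex


def ensure_image(ref, local_cache, fetch_layers):
    """Return (layers, source): cached layers, or pulled and verified ones."""
    if ref in local_cache:
        return local_cache[ref], "cache"
    layers = fetch_layers(ref)  # hypothetical: ref -> {digest: blob}
    for digest, blob in layers.items():
        if not verify_layer(blob, digest):
            raise ValueError(f"digest mismatch for {digest}")
    local_cache[ref] = layers
    return layers, "pulled"


# A fake registry holding one single-layer image.
blob = b"nginx layer bytes (placeholder)"
digest = "sha256:" + hashlib.sha256(blob).hexdigest()
fake_registry = {"nginx:latest": {digest: blob}}

cache = {}
print(ensure_image("nginx:latest", cache, fake_registry.__getitem__)[1])  # pulled
print(ensure_image("nginx:latest", cache, fake_registry.__getitem__)[1])  # cache
```

The second call never touches the registry, which is why repeat runs of the same image start almost instantly.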

Key Takeaways

Docker uses a client-server architecture: the CLI sends API requests to the Docker Daemon (dockerd), which manages everything else

Docker images are read-only, layered, immutable templates. They are the blueprint. Containers are the live, running instances created from those blueprints

One image can create any number of containers, each with its own independent writable layer and isolated environment

The Docker Daemon (dockerd) doesn't do the lowest-level container work alone. It delegates to containerd, which uses runc to interface directly with the Linux kernel via namespaces and cgroups

containerd-shim decouples running containers from the daemons above them, which is what lets containers survive a daemon restart when Docker's live-restore option is enabled

The Docker Client can connect to local or remote daemons, making remote container management possible with the same familiar CLI

Docker Hub is the default public registry with official and community images. For private images in production, registries like ECR, Google Artifact Registry, Azure Container Registry, or self-hosted Harbor are standard choices

docker pull downloads images from a registry to local cache. docker push uploads locally built images back to a registry

When you run docker run, you're triggering a chain that goes: CLI → dockerd → containerd → runc → Linux kernel

Conclusion

Docker's architecture is modular by design. Each component has a single, clear responsibility: the client translates commands, the daemon orchestrates operations, containerd manages container lifecycle, runc talks to the kernel, and registries store and distribute images. Nothing does more than its job.

Understanding these layers pays off when things go wrong. A container that exits immediately usually isn't a Docker problem. More likely, the process inside the container exited. An image pull that fails usually points to a registry or network issue rather than a daemon bug. Knowing where to look cuts debugging time significantly.

The next step from here is understanding how to build your own images using a Dockerfile, where you get direct control over every layer that goes into the image your containers run from.
