👋 Hey there, I’m Dheeraj Choudhary, an AI/ML educator, cloud enthusiast, and content creator on a mission to simplify tech for the world.

After years of building on YouTube and LinkedIn, I’ve finally launched TechInsight Neuron, a no-fluff, insight-packed newsletter where I break down the latest in AI, Machine Learning, DevOps, and Cloud.

What to expect: actionable tutorials, tool breakdowns, industry trends, and career insights, all crafted for engineers, builders, and the curious.

If you learn by doing and want to stay ahead in the tech game, you're in the right place.

Introduction

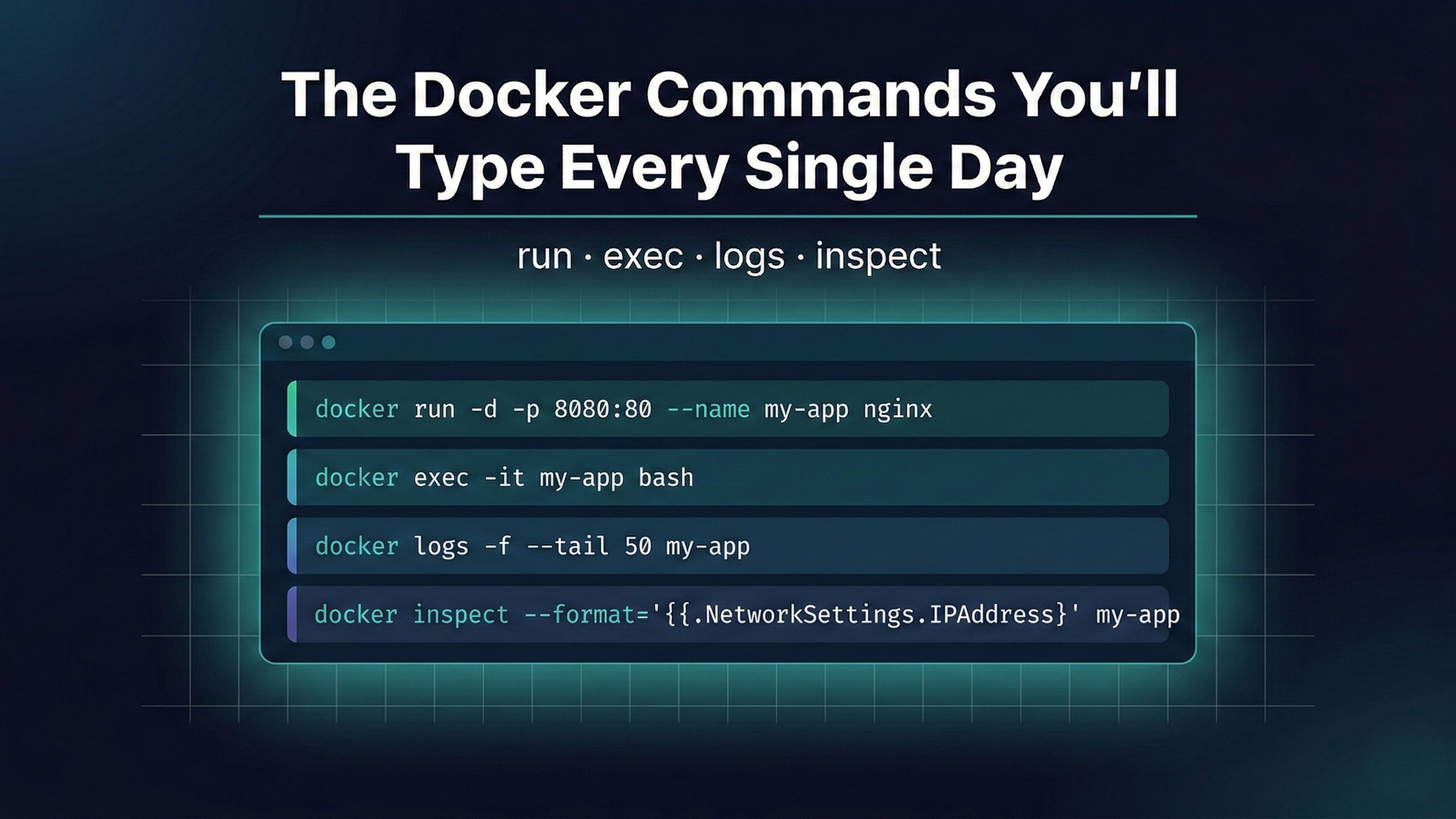

Knowing Docker conceptually is one thing. Actually using it day to day means getting comfortable with a handful of commands you'll type dozens of times a week. Docker's CLI is large, but the truth is most of your daily container work comes down to four commands: docker run, docker exec, docker logs, and docker inspect. Get these right and you can start containers, jump inside them, debug output, and pull out configuration details without ever reaching for a GUI.

This guide goes through each command properly: what it does, why each flag exists, what the output means, and how it fits into a real workflow. There are also a few additions the PDF doesn't cover, including docker ps variations, Alpine shell fallbacks for exec, and log piping patterns that save time when debugging in production.

docker run: Creating and Starting Containers

docker run is the command that does two things at once: it creates a new container from an image and immediately starts it. Most people think of it as just "starting a container," but technically every docker run call creates a brand new container instance. That distinction matters when you look at docker ps -a and wonder why you have twenty stopped containers.

Basic syntax:

docker run [OPTIONS] IMAGE [COMMAND]

The simplest possible usage:

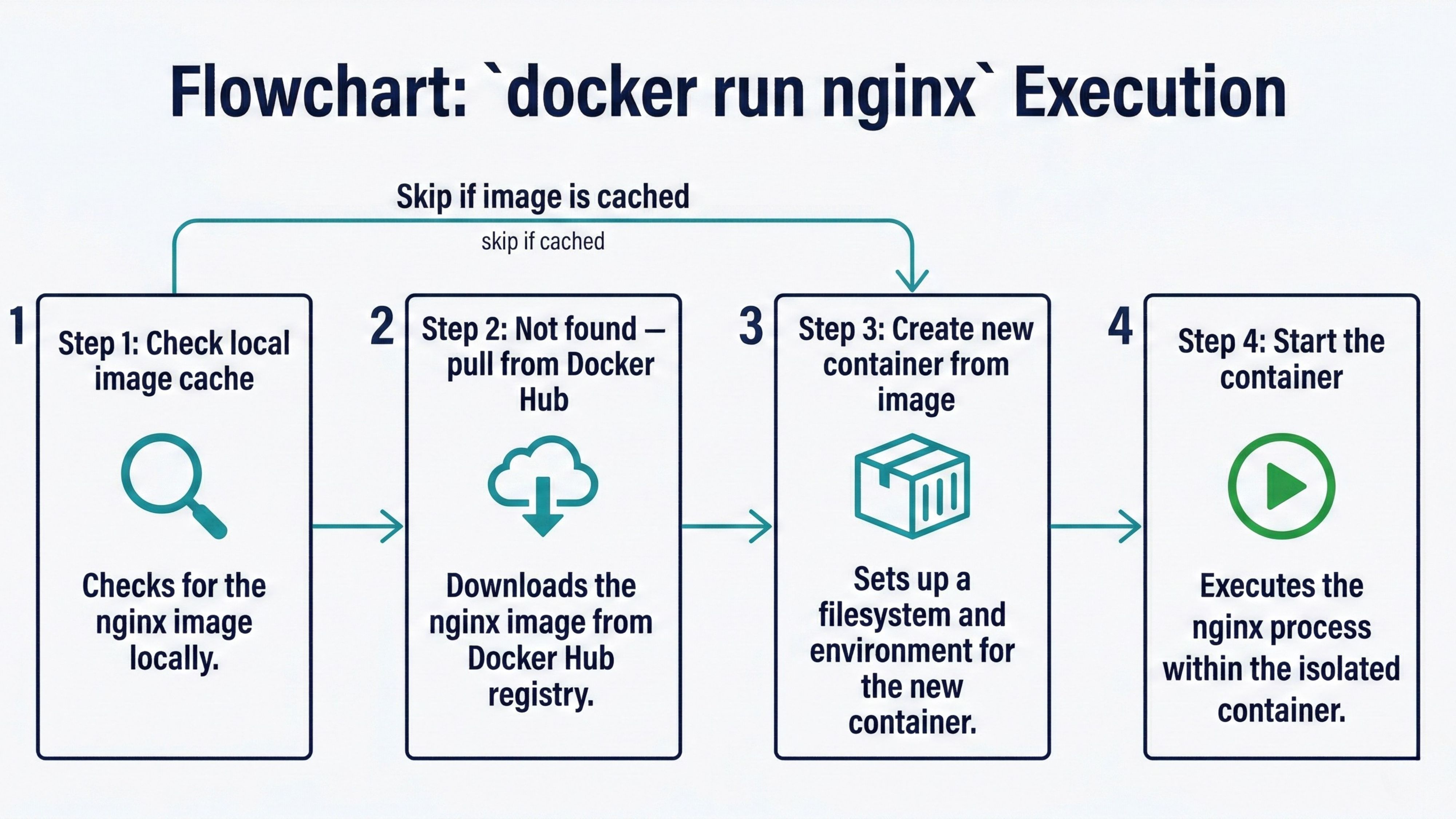

docker run nginx

That one command triggers a four-step sequence:

1. Docker checks the local image cache for nginx:latest
2. If it doesn't exist locally, Docker pulls it from Docker Hub layer by layer
3. Docker creates a new container from that image
4. Docker starts the container

The problem with running it exactly like that is your terminal gets locked to the container's output. Hit Ctrl+C and the container stops. That's why in practice you almost always pair docker run with flags.
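The four-step sequence described above can be approximated with separate CLI calls. This is only a sketch of the order of operations, not how Docker implements docker run internally, and the function name run_step_by_step is made up for illustration. One difference to keep in mind: docker start does not attach your terminal by default, so the result behaves more like a detached run.

```shell
# Sketch: the pull -> create -> start sequence behind `docker run IMAGE`.
# run_step_by_step is a hypothetical helper name, not a Docker command.
run_step_by_step() {
  image="$1"

  # Steps 1-2: check the local image cache and pull only when the image is missing.
  if ! docker image inspect "$image" >/dev/null 2>&1; then
    docker pull "$image" || return 1
  fi

  # Step 3: create a container from the image; this prints the new container ID.
  container_id=$(docker create "$image") || return 1

  # Step 4: start the container that was just created.
  docker start "$container_id"
}

# Usage: run_step_by_step nginx
```

Calling the function twice leaves two containers behind, which is the same "every run creates a new container" behavior described earlier.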

docker run: The Flags You'll Use Every Day

Options are where docker run gets real power. Here are the ones you'll use constantly, with an explanation of why each one exists.

-d (Detached mode)

docker run -d nginx

Runs the container in the background and prints the container ID. Your terminal returns immediately. This is how you run long-lived services like web servers and databases. Without -d, your terminal is attached to the container's stdout until the container stops.

-p (Port mapping)

docker run -p 8080:80 nginx

The format is host_port:container_port. This tells Docker to forward traffic arriving at port 8080 on your machine into port 80 inside the container. Without this, the port is not published on the host, so requests to localhost:8080 go nowhere. You can map any free host port to any container port, and map multiple ports at once:

docker run -p 8080:80 -p 8443:443 nginx

--name (Custom container name)

docker run --name my-nginx nginx

Without --name, Docker generates a random two-word name like vibrant_cannon or sleepy_turing. Naming your containers makes every subsequent command cleaner since you reference containers by name rather than by their truncated ID. Names must be unique across all containers on the host, including stopped ones.
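Because a stopped container still holds its name, rerunning the same docker run --name command fails with a name conflict until the old container is removed. A minimal sketch of one way to handle that in a development script follows. The helper name run_named is hypothetical, and it force-removes whatever currently holds the name, so it is only appropriate for disposable containers.

```shell
# Sketch: (re)start a container under a fixed name.
# run_named is a hypothetical helper, not part of the Docker CLI.
run_named() {
  name="$1"
  shift

  # `docker container inspect` exits non-zero when no container has this name.
  if docker container inspect "$name" >/dev/null 2>&1; then
    # Remove the old container (running or stopped) to free the name.
    docker rm -f "$name" >/dev/null || return 1
  fi

  # Remaining arguments go straight to docker run (flags, image, command).
  docker run -d --name "$name" "$@"
}

# Usage: run_named my-nginx -p 8080:80 nginx
```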

-it (Interactive terminal)

docker run -it ubuntu bash

-i keeps stdin open so you can type into the container. -t allocates a pseudo-TTY, giving you a proper terminal with line editing and formatting. Together they give you an interactive shell session inside the container. Type exit to leave; because the shell is the container's main process, that also stops the container.

For minimal images like Alpine that don't have bash, use sh instead:

docker run -it alpine sh

-e (Environment variables)

docker run -e DB_HOST=localhost -e DB_PORT=5432 myapp

Passes environment variables into the container at startup. This is the standard way to inject configuration into containerized applications, keeping secrets and settings out of the image itself.
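When the list of variables grows, docker run also accepts --env-file, which reads one KEY=value pair per line from a file. The sketch below wraps that in a small helper; the function name run_with_env_file and the file name app.env are assumptions for illustration. Note that variables passed either way are still visible to anyone who can run docker inspect on the container.

```shell
# Sketch: load configuration from a file instead of repeating -e flags.
# run_with_env_file and app.env are hypothetical names.
run_with_env_file() {
  env_file="$1"
  image="$2"

  # Fail early with a clear message if the file is missing or unreadable.
  if [ ! -r "$env_file" ]; then
    echo "env file not readable: $env_file" >&2
    return 1
  fi

  # --env-file reads one KEY=value pair per line.
  docker run -d --env-file "$env_file" "$image"
}

# Usage:
#   printf 'DB_HOST=localhost\nDB_PORT=5432\n' > app.env
#   run_with_env_file app.env myapp
```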

--rm (Auto-remove on exit)

docker run --rm ubuntu echo "hello"

Automatically deletes the container when it exits. Useful for one-off commands and short-lived tasks where you don't want stopped containers accumulating in docker ps -a.

Combining flags

In practice you combine several flags in one command:

docker run -d -p 8080:80 --name web-server nginx

This runs nginx detached, maps host port 8080 to container port 80, and names the container web-server. That single command is what you'll type (or have in a script) when spinning up a development server.

The same pattern works for a development database, here PostgreSQL 16 with a password, a database name, and its port published:

docker run -d \
  --name postgres-dev \
  -e POSTGRES_PASSWORD=mypassword \
  -e POSTGRES_DB=appdb \
  -p 5432:5432 \
  postgres:16

docker ps: Seeing What's Running

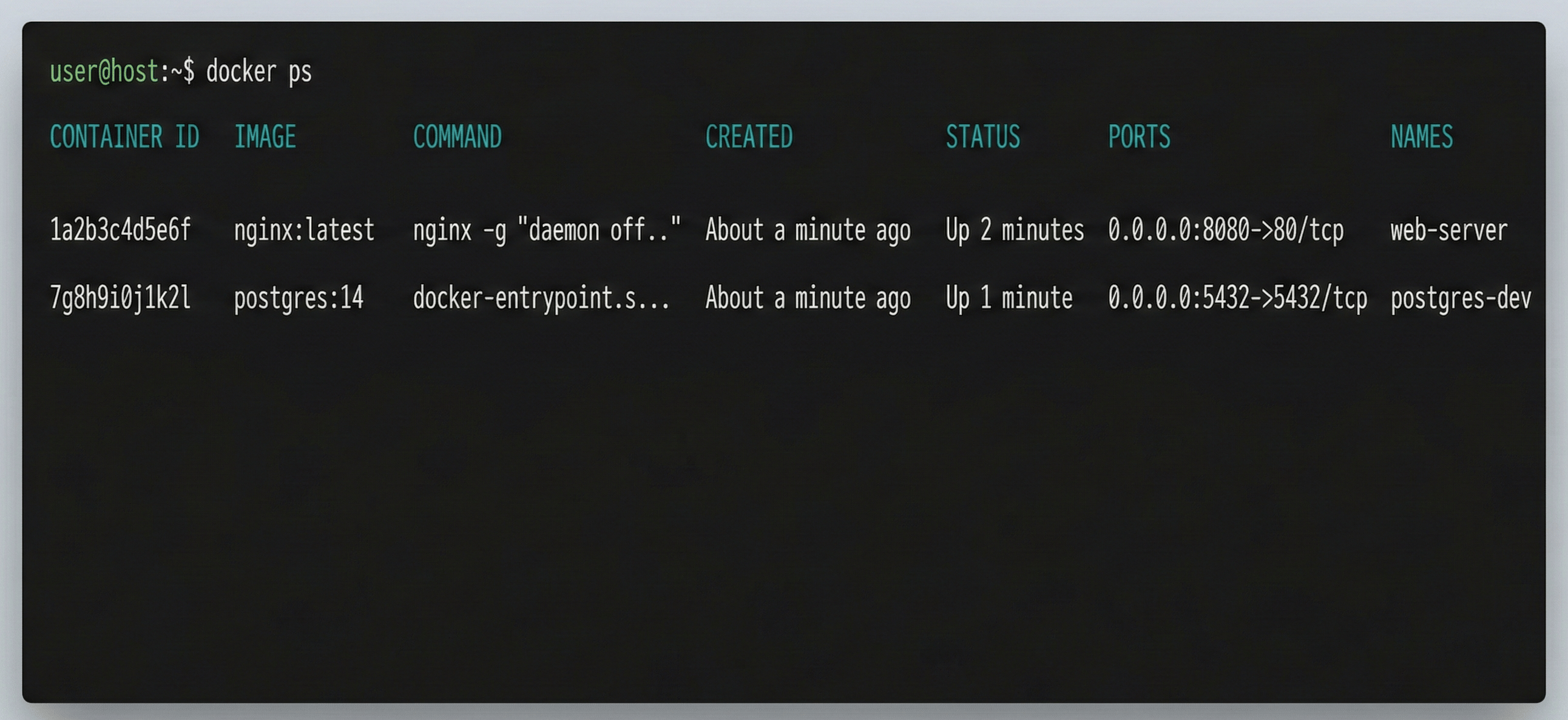

Before jumping into exec or logs, you need to know what containers exist and what state they're in. docker ps is your primary tool for that.

# Show only running containers
docker ps

# Show all containers, including stopped ones
docker ps -a

The output columns are: CONTAINER ID, IMAGE, COMMAND, CREATED, STATUS, PORTS, and NAMES. The STATUS column is the one to watch. Running containers show Up X minutes. Stopped containers show Exited (0) X minutes ago when the main process exited cleanly, or a non-zero code such as Exited (1) when it exited with an error.
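The exit code in the STATUS column carries a little more information than clean versus failed. By shell convention, a code above 128 usually means the process was terminated by a signal, with the signal number being the code minus 128 (137 corresponds to SIGKILL, 143 to SIGTERM). A small sketch that turns a code into a description; the helper name describe_exit_code and the message wording are illustrative only.

```shell
# Sketch: turn a container exit code into a short description.
# describe_exit_code is a hypothetical helper; the messages are our own wording.
describe_exit_code() {
  code="$1"
  if [ "$code" -eq 0 ]; then
    echo "clean exit"
  elif [ "$code" -gt 128 ]; then
    # Codes above 128 conventionally mean "terminated by signal (code - 128)".
    echo "killed by signal $((code - 128))"
  else
    echo "application error (exit code $code)"
  fi
}

# Usage with a real container (my-nginx is the example name used in this guide):
#   describe_exit_code "$(docker inspect --format '{{.State.ExitCode}}' my-nginx)"
```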

Useful variations:

# Show only container IDs (useful for scripting)
docker ps -q

# Show only stopped containers
docker ps --filter "status=exited"

# Show containers from a specific image
docker ps --filter "ancestor=nginx"

# Show the last N created containers regardless of status
docker ps -n 5

The -q flag is particularly useful when you want to perform bulk operations:

# Stop all running containers
docker stop $(docker ps -q)

# Remove all stopped containers
docker rm $(docker ps -aq --filter "status=exited")
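One wrinkle with the command-substitution pattern: when docker ps -q returns nothing, docker stop is called with no arguments and exits with a usage error. A guarded variant is sketched below; stop_all_running is a hypothetical helper name. For the second case, removing stopped containers, the built-in docker container prune does the same job and asks for confirmation first.

```shell
# Sketch: stop every running container, but do nothing when none are running.
# stop_all_running is a hypothetical helper name.
stop_all_running() {
  ids=$(docker ps -q)

  if [ -z "$ids" ]; then
    echo "no running containers"
    return 0
  fi

  # $ids is left unquoted on purpose so each ID becomes its own argument.
  docker stop $ids
}

# Usage: stop_all_running
```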

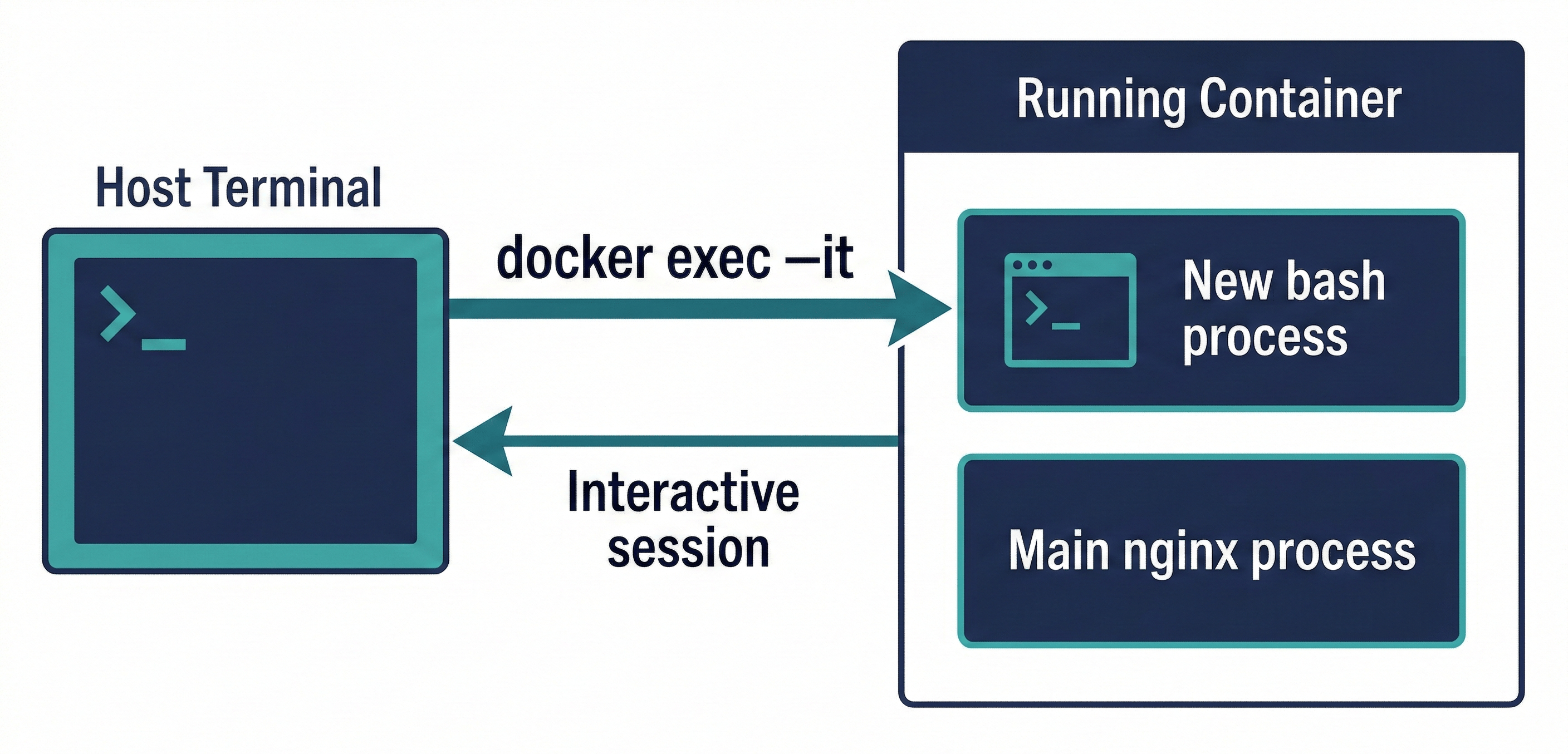

docker exec: Getting Inside a Running Container

docker exec runs a new process inside an already-running container. It's how you inspect the container's filesystem, check configuration files, run one-off scripts, or open an interactive shell for debugging. The key word is "running": exec only works on containers in the running state. If the container is stopped, you'll get an error.
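In scripts it can help to check the container's state first and print a clearer message than the raw Docker error. A minimal sketch, assuming a hypothetical helper named exec_if_running; it relies on the .State.Running field from docker inspect, which is true only while the container is running.

```shell
# Sketch: run a command via docker exec only if the container is running.
# exec_if_running is a hypothetical helper name.
exec_if_running() {
  container="$1"
  shift

  # .State.Running is "true" for a running container; inspect fails if it doesn't exist.
  running=$(docker inspect --format '{{.State.Running}}' "$container" 2>/dev/null)

  if [ "$running" != "true" ]; then
    echo "container '$container' is not running; start it with: docker start $container" >&2
    return 1
  fi

  docker exec "$container" "$@"
}

# Usage: exec_if_running my-nginx ls /usr/share/nginx/html
```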

Basic syntax:

docker exec [OPTIONS] CONTAINER COMMAND

Interactive shell

The most common use is dropping into a bash shell:

docker exec -it my-nginx bash

-i keeps stdin open and -t allocates a pseudo-TTY, same as with docker run -it. From inside this shell you can explore the filesystem, check config files, look at running processes, and test network connectivity, all as if you were logged into a tiny Linux machine.

If the container image doesn't have bash (common with Alpine-based minimal images), fall back to sh:

docker exec -it my-nginx sh

Running one-off commands

You don't always need a full interactive session. Sometimes you just want to run a single command and see the output:

# List files in the nginx web directory
docker exec my-nginx ls /usr/share/nginx/html

# Print the nginx configuration
docker exec my-nginx cat /etc/nginx/nginx.conf

# Check running processes inside the container
# (needs ps in the image; slim images may not ship it, in which case
# docker top my-nginx shows the same information from the host)
docker exec my-nginx ps aux

# Check environment variables inside the container
docker exec my-nginx env

Running as a specific user

# Open a shell as root inside the container (-it is needed for an interactive shell)
docker exec -it -u root my-nginx bash

# Open a shell as a specific user by name
docker exec -it -u nginx my-nginx bash

Running in the background

# Start a long-running process inside a container without blocking your terminal
docker exec -d my-nginx /usr/local/bin/maintenance-script.sh

Here -d is short for --detach. It is not the same as docker run -d: it doesn't start a container, it only detaches the new process from your terminal inside a container that is already running.

A critical point: any changes you make inside a container via exec live only in that container's writable layer. They don't modify the image. When the container is removed, those changes are gone. If you need changes to persist, they belong in the Dockerfile.
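To see what has actually drifted in that writable layer, docker diff lists every path that differs from the image, prefixed with A (added), C (changed), or D (deleted). The sketch below condenses that output into a one-line summary; summarize_changes is a hypothetical helper name.

```shell
# Sketch: summarize what has changed in a container's writable layer.
# summarize_changes is a hypothetical helper built on `docker diff`,
# which prefixes each path with A (added), C (changed), or D (deleted).
summarize_changes() {
  docker diff "$1" | awk '
    { count[$1]++ }
    END {
      printf "added=%d changed=%d deleted=%d\n", count["A"], count["C"], count["D"]
    }'
}

# Usage: summarize_changes my-nginx
```

Anything that shows up here and needs to survive a container rebuild is a candidate for a Dockerfile instruction.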

docker logs: Reading Container Output

Containers write their output to stdout and stderr. Docker captures both streams and stores them so you can read them at any time with docker logs. This is your primary debugging tool when a container misbehaves.

docker logs my-nginx

That prints all captured output from the container since it started. For an active web server that's been running for hours, that can be thousands of lines. The flags let you be more precise.

Following logs in real-time

docker logs -f my-nginx

The -f flag (follow) streams new log output as it arrives, exactly like tail -f on a log file. Press Ctrl+C to stop following. The container keeps running.

Limiting output

# Show only the last 50 lines
docker logs --tail 50 my-nginx

# Combine follow with tail for the most useful debugging pattern
docker logs -f --tail 100 my-nginx

The combination of -f and --tail is the most practical pattern. You get recent context plus live streaming, without scrolling through thousands of historical lines.

Filtering by time

# Logs from the last 10 minutes
docker logs --since 10m my-nginx

# Logs from the last hour
docker logs --since 1h my-nginx

# Logs between two specific timestamps
docker logs --since 2024-01-15T10:00:00 --until 2024-01-15T11:00:00 my-nginx

Adding timestamps

docker logs -t my-nginx

Adds RFC3339Nano timestamps to each log line. Useful when you're correlating container logs with external events.

Piping logs for searching

docker logs output can be piped to standard shell tools:

# Search for errors
docker logs my-nginx 2>&1 | grep -i error

# Count lines containing 404
docker logs my-nginx 2>&1 | grep -c "404"

# Save logs to a file
docker logs my-nginx > app.log 2>&1

docker logs replays the container's stdout on your stdout and its stderr on your stderr. The 2>&1 redirects stderr to stdout so both streams get captured in the pipe or file.
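The stream merging matters more than it looks: many applications write their errors to stderr, and without 2>&1 those lines never reach grep. A small sketch that wraps the pattern in a reusable function; count_log_matches is a hypothetical helper name.

```shell
# Sketch: count log lines matching a pattern across stdout and stderr.
# count_log_matches is a hypothetical helper name.
count_log_matches() {
  container="$1"
  pattern="$2"

  # 2>&1 merges the container's stderr into stdout before the pipe.
  # grep -c prints the number of matching lines; -i ignores case.
  # Note: grep exits with status 1 when the count is 0.
  docker logs "$container" 2>&1 | grep -ci -- "$pattern"
}

# Usage: count_log_matches my-nginx error
```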

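One important limitation: docker logs can only show what the logging driver keeps on the host. The default json-file driver supports this directly, as do local and journald. With a remote driver such as fluentd, awslogs, or gelf, the output is shipped to that system instead. On Docker Engine 20.10 and later a local cache (dual logging) is enabled by default, so docker logs still works, but on older engines, or when that cache is disabled, the command fails with an error saying the configured driver does not support reading. That's a common surprise when moving from development (json-file) to production (centralized logging).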
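Before relying on docker logs in a new environment, it is worth checking which driver is actually in use. A short sketch follows; the two helper names are hypothetical, while the template fields come from the docker inspect and docker info output.

```shell
# Sketch: find out which logging driver is in effect.
# log_driver_of and default_log_driver are hypothetical helper names.

# Logging driver configured for one container.
log_driver_of() {
  docker inspect --format '{{.HostConfig.LogConfig.Type}}' "$1"
}

# Default logging driver the daemon applies to new containers.
default_log_driver() {
  docker info --format '{{.LoggingDriver}}'
}

# Usage:
#   log_driver_of my-nginx
#   default_log_driver
```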

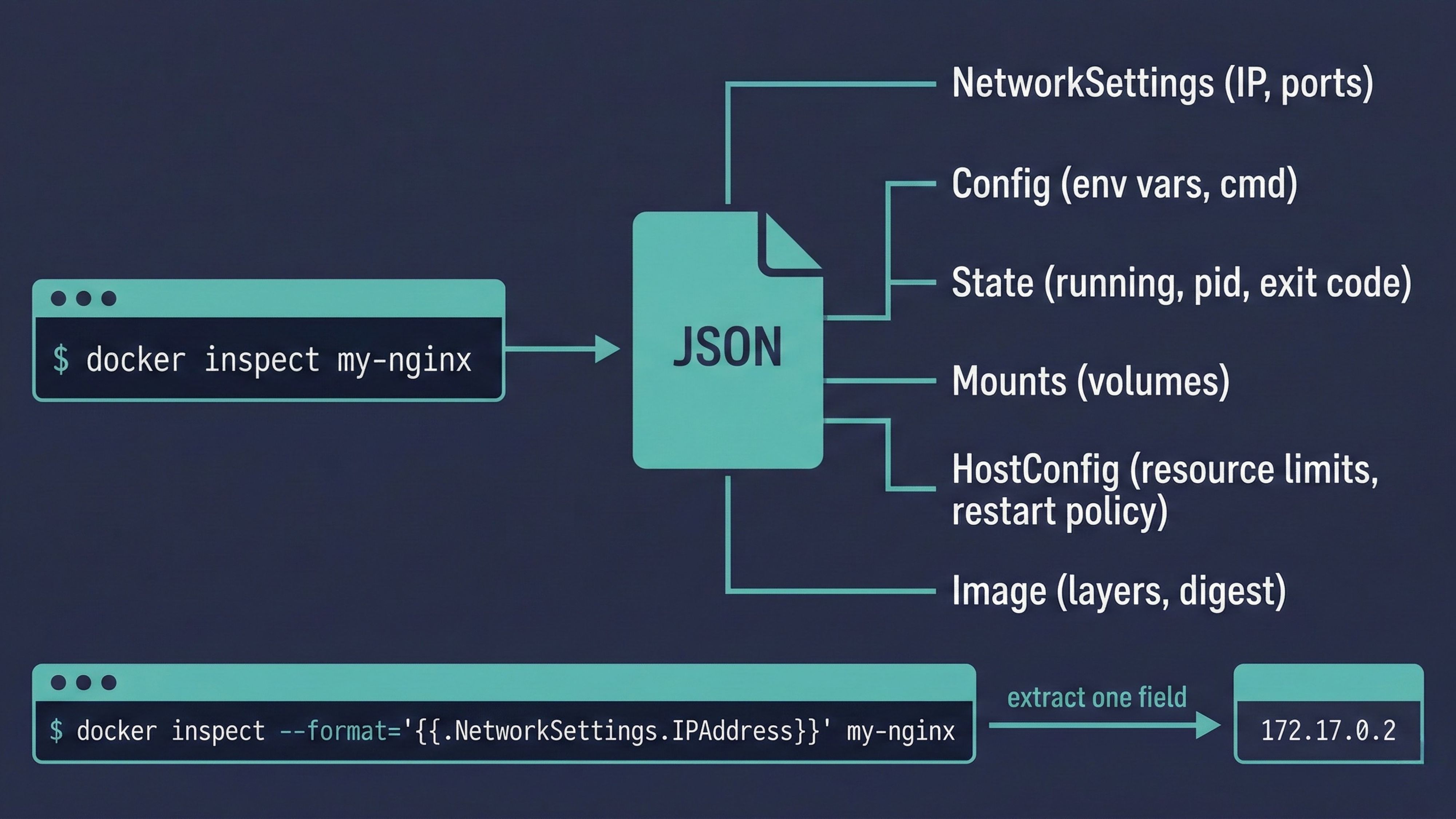

docker inspect: The Full Picture

docker inspect returns a large JSON object containing every piece of configuration and state information about a container or image. It's not a command you run constantly, but when you need to know something specific about how a container is configured, it's the definitive source.

docker inspect my-nginx

The output is hundreds of lines of JSON covering everything: network configuration, volume mounts, environment variables, resource limits, image details, container state, restart policy, the full command that was used to start the container, and more.

Extracting specific fields with --format

Reading through hundreds of lines of JSON is tedious. The --format flag lets you extract exactly the field you want using Go template syntax:

# Get the container's IP address (populated on the default bridge network only)
docker inspect --format='{{.NetworkSettings.IPAddress}}' my-nginx

# Get the container's current state
docker inspect --format='{{.State.Status}}' my-nginx

# Get the process ID of the container on the host
docker inspect --format='{{.State.Pid}}' my-nginx

# Get all environment variables
docker inspect --format='{{.Config.Env}}' my-nginx

# Get the restart policy
docker inspect --format='{{.HostConfig.RestartPolicy.Name}}' my-nginx

# Get volume mounts
docker inspect --format='{{json .Mounts}}' my-nginx

The dot notation follows the JSON structure. If you're not sure of the exact field path, run docker inspect without --format first, find the field you need in the JSON output, then construct the template from that path.
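Go templates can also loop over lists and maps with range, which helps in two common cases: a container attached to user-defined networks, where the top-level .NetworkSettings.IPAddress field is empty and the addresses live under .NetworkSettings.Networks, and environment variables, which otherwise print as one bracketed list. A sketch with two hypothetical helper names:

```shell
# Sketch: range-based templates for docker inspect.
# container_ips and container_env are hypothetical helper names.

# One IP address per attached network, one per line.
container_ips() {
  docker inspect --format '{{range .NetworkSettings.Networks}}{{println .IPAddress}}{{end}}' "$1"
}

# One environment variable per line instead of a bracketed list.
container_env() {
  docker inspect --format '{{range .Config.Env}}{{println .}}{{end}}' "$1"
}

# Usage:
#   container_ips my-nginx
#   container_env my-nginx
```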

Inspecting images

docker inspect works on images too, not just containers:

docker inspect nginx:latest

This returns the image's layers, configuration, exposed ports, default environment variables, and the entrypoint and command that containers will run by default.

Inspecting multiple objects at once

docker inspect container1 container2 container3

Returns a JSON array with one entry per object. Useful for comparing configurations across containers.
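Combining multiple objects with --format gives a compact comparison, because the template is applied once per object and each result is printed on its own line. A sketch, with compare_states as a hypothetical helper name; note that .Name is reported with a leading slash.

```shell
# Sketch: one summary line per container for quick side-by-side comparison.
# compare_states is a hypothetical helper name.
compare_states() {
  docker inspect \
    --format '{{.Name}}: {{.State.Status}} (restart policy: {{.HostConfig.RestartPolicy.Name}})' \
    "$@"
}

# Usage: compare_states container1 container2 container3
```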

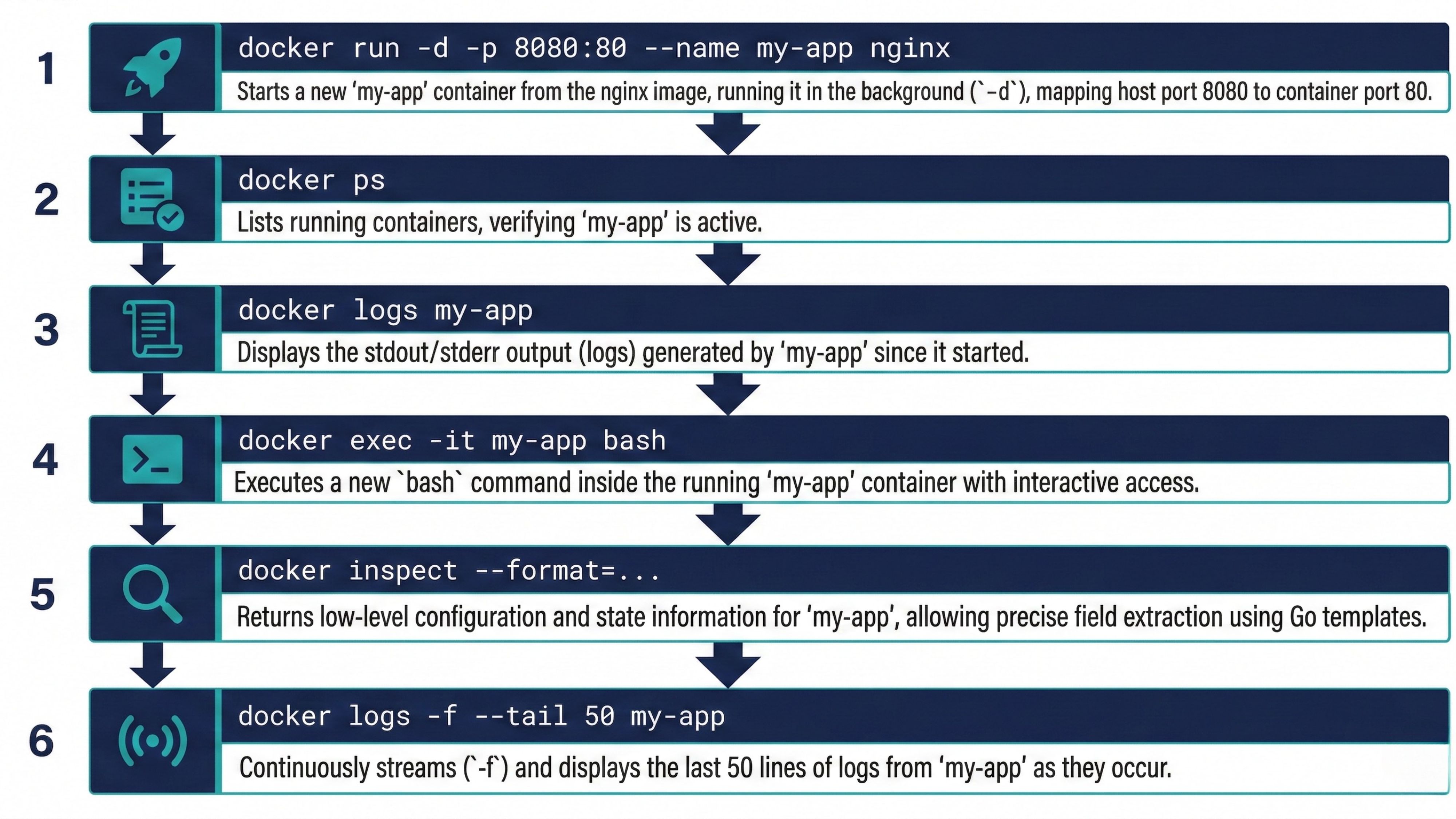

A Practical Daily Workflow

These four commands don't exist in isolation. Here's how they chain together in the kind of workflow you'll actually use when running and debugging containerized applications.

# Step 1: Start a container in the background with a name and port mapping
docker run -d -p 8080:80 --name my-app nginx

# Step 2: Confirm it's running
docker ps

# Step 3: Check the initial logs to see if it started cleanly
docker logs my-app

# Step 4: Jump inside to inspect config or filesystem
docker exec -it my-app bash

# Step 5: Get the container's IP and network details
docker inspect --format='{{.NetworkSettings.IPAddress}}' my-app

# Step 6: Watch live log output while testing
docker logs -f --tail 50 my-app

This sequence covers the full lifecycle of working with a running container. Start it, verify it, check its output, get inside it, pull out configuration details, monitor it live.

When something goes wrong, the debugging path usually goes in this order: docker ps to confirm the container is actually running, docker logs to see what the application output says, docker exec to get inside and poke around if logs aren't enough, and docker inspect when you suspect a configuration issue like a wrong environment variable, a missing volume mount, or a network misconfiguration.
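The non-interactive parts of that debugging order can be bundled into one first-look command. The sketch below is one possible arrangement, not a standard tool: triage is a hypothetical helper name, the 20-line tail is an arbitrary choice, and the name filter matches substrings, so similarly named containers may also appear in the first section.

```shell
# Sketch: first-look triage for a misbehaving container.
# triage is a hypothetical helper name; docker exec is left out because it is interactive.
triage() {
  container="$1"

  echo "== state =="
  # -a includes stopped containers; the name filter matches substrings.
  docker ps -a --filter "name=$container"

  echo "== last 20 log lines =="
  # Merge stderr into stdout so both streams appear in order.
  docker logs --tail 20 "$container" 2>&1

  echo "== configuration =="
  docker inspect \
    --format 'status={{.State.Status}} exit={{.State.ExitCode}} restart={{.HostConfig.RestartPolicy.Name}}' \
    "$container"
}

# Usage: triage my-app
```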

Key Takeaways

- docker run creates and starts a new container from an image. Every call creates a fresh container instance
- -d runs the container in the background. -p maps host ports to container ports. --name gives the container a stable identifier. -it opens an interactive terminal. -e passes environment variables
- docker ps shows running containers. docker ps -a shows all containers including stopped ones. docker ps -q returns only IDs, which is useful for bulk operations
- docker exec runs a new process inside a running container. The container must be running. Use -it for interactive shells, no flags for one-off commands
- On Alpine-based images, use sh instead of bash since bash is not installed by default
- docker logs reads a container's stdout and stderr. -f follows in real-time. --tail N limits to the last N lines. --since filters by time. Pipe to grep for searching
- docker logs depends on the logging driver. json-file and local always work; remote drivers like awslogs rely on the local log cache that Docker Engine 20.10 and later enable by default
- docker inspect returns full JSON configuration and state for a container or image. Use --format with Go template syntax to extract specific fields without parsing the entire JSON output
- The standard debugging workflow is: ps to confirm state, logs to check output, exec to investigate from inside, inspect to verify configuration

Conclusion

These four commands cover the majority of what you'll do with Docker containers day to day. They're not complicated individually, but knowing the right flags and when to combine them makes a real difference in how quickly you can start containers, investigate problems, and pull out the information you need.

The pattern to internalize is this: docker run to create, docker ps to observe, docker logs to read output, docker exec to interact, docker inspect to interrogate. Once those five commands feel natural, the rest of Docker's CLI follows the same logical structure and becomes easy to pick up.

The next natural step after mastering these is learning docker build and Dockerfiles, where you define the images that docker run uses to create containers in the first place.
