👋 Hey there, I’m Dheeraj Choudhary, an AI/ML educator, cloud enthusiast, and content creator on a mission to simplify tech for the world.

After years of building on YouTube and LinkedIn, I’ve finally launched TechInsight Neuron, a no-fluff, insight-packed newsletter where I break down the latest in AI, Machine Learning, DevOps, and Cloud.

What to expect: actionable tutorials, tool breakdowns, industry trends, and career insights, all crafted for engineers, builders, and the curious.

If you're someone who learns by doing and wants to stay ahead in the tech game, you're in the right place.

Introduction

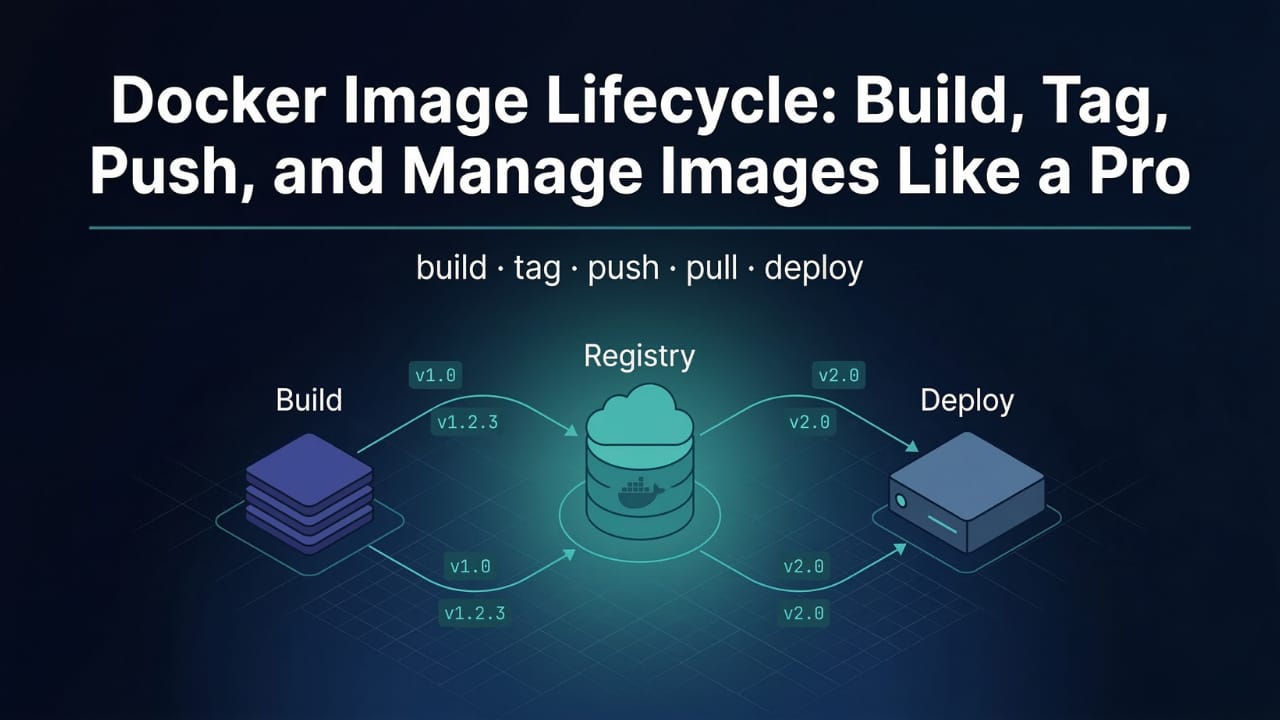

Writing a Dockerfile gets you halfway there. The other half is knowing what to do with it. Docker image lifecycle management covers everything that happens after you write your Dockerfile: building the image, naming and versioning it correctly, pushing it to a registry so others can pull it, managing what's stored locally, and understanding the complete flow from first build to production deployment.

This is where Docker starts feeling like a real part of a development workflow rather than just a local experiment. Once your image is on Docker Hub, anyone on your team can pull it, any CI/CD pipeline can deploy it, and any server in the world can run it with a single command. This guide walks through every step of that process, with the details on tagging strategy and the latest tag behavior that trips up a lot of developers.

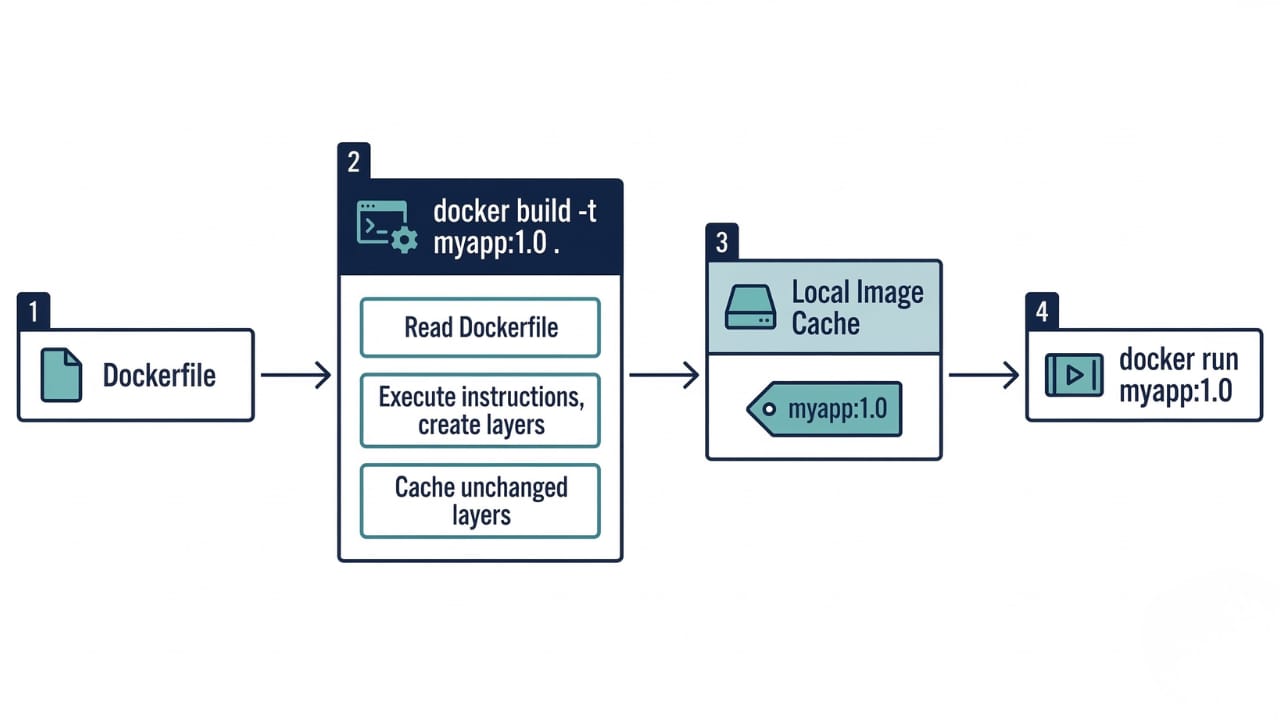

docker build: Creating Images from Dockerfiles

docker build reads your Dockerfile and executes each instruction in order, producing a tagged image you can run as containers. The basic syntax is:

docker build -t myapp:1.0 .

Breaking that down:

docker build is the command that triggers the build process

-t myapp:1.0 tags the resulting image with the name myapp and version 1.0

The trailing . is the build context, the directory Docker packages up and sends to the daemon, where it looks for the Dockerfile

What happens during a build:

Docker reads the Dockerfile line by line

Each instruction executes in order and produces a new image layer

Layers that haven't changed are reused from cache (you'll see CACHED in the output)

The final image is stored locally and tagged with whatever name you provided

Useful docker build flags beyond -t:

# Build from a Dockerfile in a different location

docker build -f Dockerfile.prod -t myapp:prod .

# Build with multiple tags at once

docker build -t myapp:1.0 -t myapp:latest .

# Pass a build argument

docker build --build-arg NODE_ENV=production -t myapp:1.0 .

# Force rebuild, ignore all cached layers

docker build --no-cache -t myapp:1.0 .

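# Suppress build output and print only the image ID on success

docker build --quiet -t myapp:1.0 .

The --no-cache flag is useful when you suspect a cached layer is stale, for example when a RUN step that installs packages is being served from cache even though newer packages are available. Note that --no-cache only bypasses the layer cache. If the base image itself has received security updates, add --pull so Docker checks the registry for a newer version even though the FROM instruction hasn't changed.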
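To see how these flags combine, here's a minimal sketch of a helper that prints a release build command instead of running it. The function name build_command and its argument order are assumptions made for illustration, not part of Docker. The flags themselves (--pull, -f, -t) are real docker build options.

```shell
# Print (not run) a docker build command that combines several flags.
# build_command is a hypothetical helper; adjust the flags to your project.
build_command() {
  image=$1                      # image name, e.g. myapp
  version=$2                    # version tag, e.g. 1.0
  dockerfile=${3:-Dockerfile}   # optional Dockerfile path, defaults to Dockerfile
  printf 'docker build --pull -f %s -t %s:%s -t %s:latest .\n' \
    "$dockerfile" "$image" "$version" "$image"
}
```

Once the printed command looks right, you can run it by piping the output to sh, for example build_command myapp 1.0 Dockerfile.prod | sh.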

Understanding Build Context and .dockerignore

When you run docker build ., Docker packages the entire current directory and sends it to the daemon as the build context. Every file in that directory is potentially available to COPY instructions. The problem is that large directories slow down your builds significantly, even if most of those files are never actually copied into the image.

A typical Node.js project without a .dockerignore:

├── node_modules/ # Could be hundreds of MB

├── .git/ # Version history, never needed in image

├── logs/ # Log files, irrelevant to build

├── .env # Secrets, should never go in an image

├── coverage/ # Test coverage reports

├── Dockerfile

└── src/

Without .dockerignore, Docker sends node_modules, .git, and all log files to the daemon on every build. That's potentially gigabytes of unnecessary data being transferred before a single instruction even runs.

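The fix is a .dockerignore file in your project root. Its syntax is close to .gitignore, with one difference worth knowing: patterns are matched relative to the root of the build context, so *.log only excludes log files at the top level, while **/*.log excludes them at any depth: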

node_modules

.git

*.log

.env

coverage

.DS_Store

dist

With this in place, docker build . only sends the files your image actually needs. On a project with a large node_modules or .git directory, context transfer can shrink from seconds to a fraction of a second. This matters especially in CI/CD environments where builds run on remote daemons and context transfer happens over a network.

A good mental model: .dockerignore is to docker build what .gitignore is to git commit. Both prevent unnecessary and sensitive files from going somewhere they don't belong.
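If you want a starting point, the sketch below writes the ignore list from this section into a project directory and refuses to overwrite a file that already exists. write_dockerignore is a hypothetical helper name, and the **/*.log line is a small variation on the list above so that log files are excluded at any depth, not just in the context root.

```shell
# Create a starter .dockerignore in the given directory (default: current one).
# Returns non-zero and leaves the file untouched if one already exists.
write_dockerignore() {
  target_dir=${1:-.}
  if [ -e "$target_dir/.dockerignore" ]; then
    echo "exists: $target_dir/.dockerignore" >&2
    return 1
  fi
  cat > "$target_dir/.dockerignore" <<'EOF'
node_modules
.git
**/*.log
.env
coverage
.DS_Store
dist
EOF
}
```

Run write_dockerignore . from your project root, then trim or extend the list to match what your Dockerfile actually copies.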

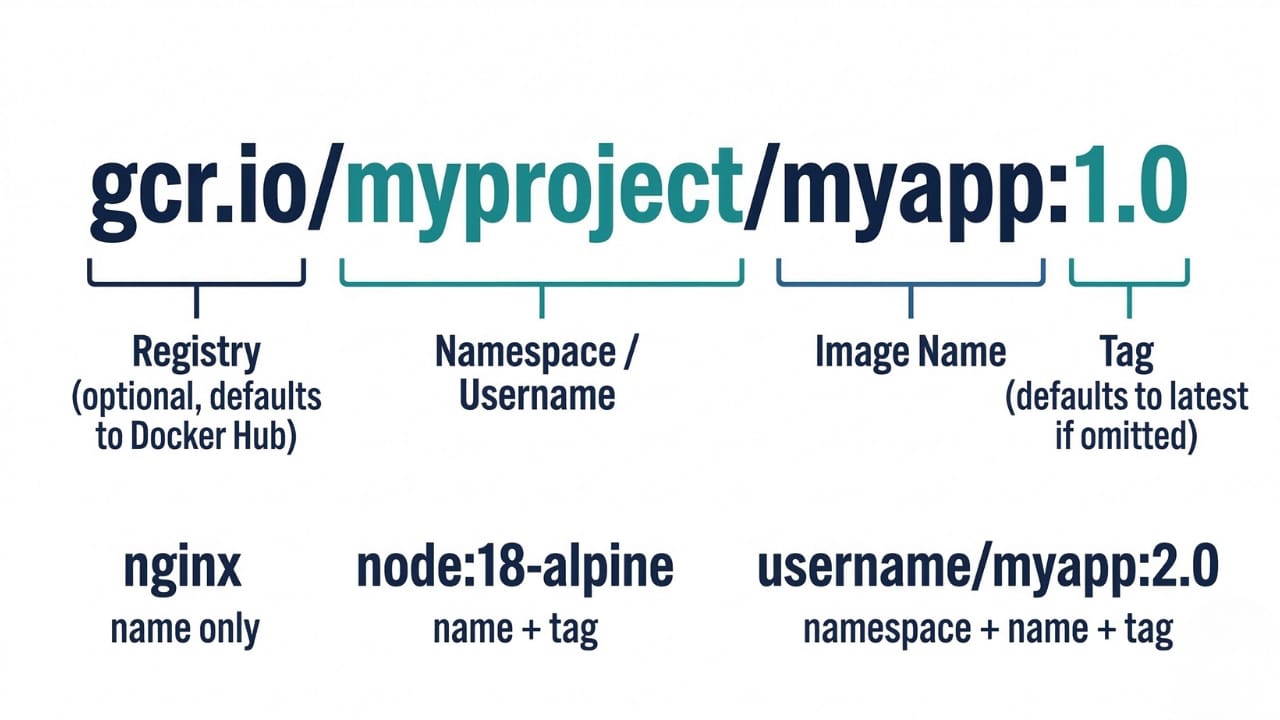

Image Naming and Tagging

Docker image names follow a specific format:

[REGISTRY/][NAMESPACE/]NAME[:TAG]

In practice:

myapp # name only, tag defaults to 'latest'

myapp:1.0 # name with version tag

myapp:1.0.5 # name with patch version

node:18-alpine # official image with variant tag

dheerajchoudhary/myapp:1.0 # namespaced for Docker Hub

gcr.io/myproject/myapp:1.0 # full registry URL + namespace + tag

Tags are just labels pointing to a specific image. A single image can have multiple tags simultaneously. When you run docker tag, you're not copying the image, you're adding a new label that points to the same underlying image layers:

# Add a second tag to an existing image

docker tag myapp:1.0 myapp:latest

# Tag for Docker Hub (must prefix with your username)

docker tag myapp:1.0 username/myapp:1.0

# Tag multiple versions pointing to the same build

docker tag myapp:1.2.3 myapp:1.2

docker tag myapp:1.2.3 myapp:1

docker tag myapp:1.2.3 myapp:latest

All four of those tags point to the same image layers. Storage is not duplicated.
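The optional parts of the naming format are filled in with defaults when you leave them out. Here's a simplified sketch of that expansion, assuming the usual Docker Hub conventions: a missing registry means docker.io, a name with no namespace lives under library, and a missing tag means latest. normalize_ref is a hypothetical helper, and it deliberately ignores digest references (name@sha256:...).

```shell
# Expand a short image reference into registry/repository:tag form.
# Simplified sketch: does not handle digest references.
normalize_ref() {
  ref=$1
  last=${ref##*/}                 # final path component, e.g. myapp:1.0
  case "$last" in
    *:*) tag=${last##*:}; ref=${ref%:*} ;;   # explicit tag: split it off
    *)   tag=latest ;;                       # no tag given: default to latest
  esac
  first=${ref%%/*}                # text before the first slash, if any
  case "$ref" in
    */*)
      case "$first" in
        # A first component with a dot, a port, or "localhost" is a registry host
        *.*|*:*|localhost) registry=$first; repo=${ref#*/} ;;
        *)                 registry=docker.io; repo=$ref ;;
      esac
      ;;
    *)
      # Bare names are official images under the library namespace
      registry=docker.io; repo=library/$ref
      ;;
  esac
  echo "$registry/$repo:$tag"
}
```

For example, normalize_ref node:18-alpine prints docker.io/library/node:18-alpine, while normalize_ref gcr.io/myproject/myapp:1.0 comes back unchanged.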

Tagging Strategy: Why latest Is Not What You Think

The latest tag is one of the most misunderstood things in Docker. Most developers assume it means "the most recent version of the image." That assumption is wrong, and it causes real problems in production.

latest is simply the default tag Docker applies when you build, tag, or push an image without specifying one. It's not dynamic. It doesn't automatically track the newest image. It just points to whatever image was last given that tag, which is usually the last build pushed without an explicit tag.

Consider this scenario:

# Developer A pushes version 1.0.1

docker build -t myapp:1.0.1 .

docker push myapp:1.0.1

# latest is NOT updated because a tag was specified

# Developer B pushes without a tag

docker build -t myapp .

docker push myapp

# latest is NOW updated to this build

Now myapp:latest points to Developer B's unversioned push, not version 1.0.1. If your deployment pulls myapp:latest, it gets Developer B's build, not the newest versioned release.

The other problem: tags can be changed. Unless you've configured your registry for immutable tags, a developer could accidentally push with the wrong tag, overwriting a previous release with a different build.
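One way to keep a floating tag out of a deployment is to check the image reference before anything is pulled. The sketch below is a hypothetical guard, not a Docker feature: is_exact_version succeeds only when the tag looks like a full MAJOR.MINOR.PATCH version (optionally with a suffix such as a commit hash) and fails for latest, a missing tag, or a partial version.

```shell
# Succeed only if the image reference carries an exact version tag.
# Hypothetical guard for deploy scripts; the accepted pattern is an assumption.
is_exact_version() {
  ref=$1
  last=${ref##*/}                 # final path component, e.g. myapp:1.2.3
  case "$last" in
    *:*) tag=${last##*:} ;;
    *)   tag=latest ;;            # no tag means Docker would use latest
  esac
  # Accept 1.2.3 and 1.2.3-suffix, reject latest, 1, 1.2, and similar
  printf '%s\n' "$tag" |
    grep -Eq '^[0-9]+\.[0-9]+\.[0-9]+([-.][0-9A-Za-z.-]+)?$'
}
```

In a deploy script this could read: is_exact_version "$IMAGE" || { echo "refusing to deploy $IMAGE" >&2; exit 1; }, where IMAGE is whatever variable holds your image reference.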

A solid tagging strategy for production:

Use semantic versioning with three levels of tags pointing to the same build:

# After building myapp version 1.2.3

docker tag myapp:1.2.3 username/myapp:1.2.3 # exact version (immutable reference)

docker tag myapp:1.2.3 username/myapp:1.2 # minor version (updates with patches)

docker tag myapp:1.2.3 username/myapp:1 # major version (updates with minor releases)

docker push username/myapp:1.2.3

docker push username/myapp:1.2

docker push username/myapp:1

This pattern gives consumers flexibility: pinning to 1.2.3 for maximum stability, 1.2 for patch updates, or 1 for all non-breaking updates. Production deployments should always pin to an exact version like 1.2.3. CI/CD pipelines can also append git commit hashes for full traceability:

docker build -t username/myapp:1.2.3-ab3f9c2 .

Managing Local Images

Over time, local image storage fills up. Knowing how to list, remove, and clean up images is part of daily Docker work.

Listing images

# List all locally stored images

docker images

# or the newer syntax

docker image ls

Output columns: REPOSITORY, TAG, IMAGE ID, CREATED, SIZE. The IMAGE ID column shows the first 12 characters of the image's SHA256 identifier.

# Filter by repository name

docker images myapp

# Show image digests (full SHA256 hash)

docker images --digests

# Show only image IDs

docker images -q

Removing images

# Remove a specific image by name and tag

docker rmi myapp:1.0

# or

docker image rm myapp:1.0

# Remove by image ID (first few characters are enough if unique)

docker rmi a1b2c3d4

# Force removal even if a stopped container still references the image

docker rmi -f myapp:1.0

You can't remove an image that a running container is using, even with -f. Stop and remove the container first.

Cleaning up unused images

# Remove dangling images (untagged images with no repository name)

docker image prune

# Remove ALL images not used by at least one container

docker image prune -a

# Remove with a filter (images older than 24 hours)

docker image prune -a --filter "until=24h"

Dangling images are the untagged <none>:<none> entries you see in docker images. They're typically leftovers from earlier builds: when you rebuild with the same tag, the tag moves to the new image and the old one is left without a name. They take up disk space and serve no purpose. docker image prune removes them without touching any tagged images.
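Before pruning, it can help to see how many dangling entries you actually have. Here's a small sketch that counts them from a repository:tag listing on standard input. count_dangling is a hypothetical helper, while the --format placeholders in the usage comment are standard docker images template fields.

```shell
# Count dangling entries in a "repository:tag" listing read from stdin.
# Usage (requires Docker):
#   docker images --format '{{.Repository}}:{{.Tag}}' | count_dangling
count_dangling() {
  # grep -c prints the number of matching lines; "|| true" keeps the
  # exit status at zero when the count is 0
  grep -c '^<none>:<none>$' || true
}
```

If you only need the list itself, docker images --filter dangling=true shows the same entries without any extra scripting.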

For a more aggressive cleanup that removes stopped containers, dangling images, unused networks, and build cache all at once:

docker system prune

# Include all unused images, not just dangling ones

docker system prune -a

Introduction to Docker Hub

Docker Hub (hub.docker.com) is the default public registry for Docker images. Think of it as GitHub but for container images rather than source code. It serves two primary purposes: distributing official base images that developers build on top of, and hosting user-published images that teams and individuals share.

Docker Hub hosts two categories of images:

Official images are maintained by Docker in partnership with software vendors. Images like nginx, postgres, redis, python, node, and ubuntu fall into this category. They follow security best practices, get regular updates, and use the short name format without a namespace prefix (just nginx, not someone/nginx).

User and organization images are published under a namespace matching the Docker Hub account name, formatted as username/imagename. These range from widely used community projects to private team images.

Docker Hub's free tier allows unlimited public repositories and one private repository. Paid plans increase the private repository limit and add features like automated builds, vulnerability scanning, and team access controls.

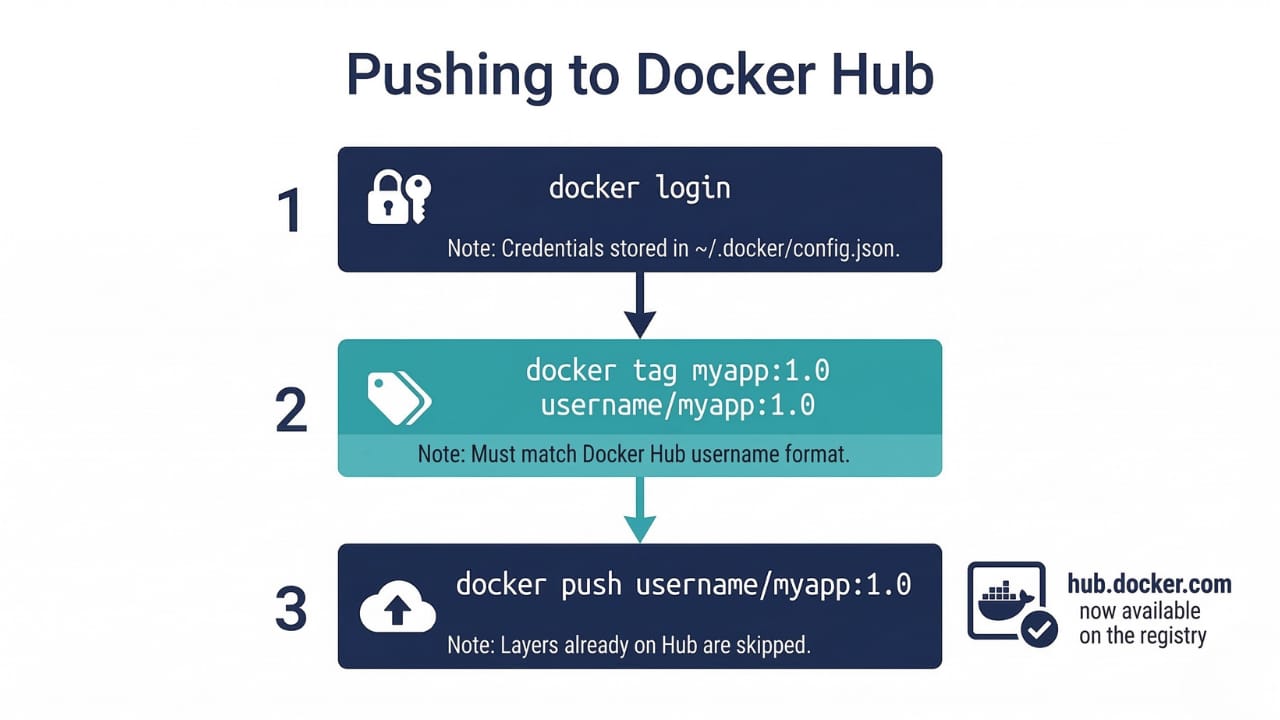

Pushing Images to Docker Hub

Three steps: log in, tag, push.

Step 1: Log in

docker login

# Prompts for Docker Hub username and password

# Or log in non-interactively (useful in CI/CD)

echo "$DOCKER_PASSWORD" | docker login -u "$DOCKER_USERNAME" --password-stdin

Using --password-stdin instead of -p prevents your password from appearing in shell history or process listings. In CI/CD pipelines, always use this form with credentials stored as environment secrets.
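In a pipeline, a missing secret tends to surface as a confusing login error. A minimal sketch of a pre-flight check, assuming the same DOCKER_USERNAME and DOCKER_PASSWORD variables as above: require_env and hub_login are hypothetical helper names, and only the docker login line is actual Docker CLI.

```shell
# Return non-zero (with a message on stderr) if any named variable is unset or empty.
require_env() {
  for name in "$@"; do
    eval "value=\${$name:-}"      # indirect lookup of the variable called $name
    if [ -z "$value" ]; then
      echo "error: $name is not set" >&2
      return 1
    fi
  done
}

# Hypothetical CI wrapper: validate the secrets first, then log in via stdin.
hub_login() {
  require_env DOCKER_USERNAME DOCKER_PASSWORD || return 1
  printf '%s' "$DOCKER_PASSWORD" |
    docker login -u "$DOCKER_USERNAME" --password-stdin
}
```

Calling hub_login at the top of a job makes the failure explicit: the script stops with a clear message before it ever contacts the registry.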

Your login credentials are stored in ~/.docker/config.json (base64-encoded rather than encrypted, unless a credential helper is configured). To log out:

docker logout

Step 2: Tag with your Docker Hub username

Docker Hub requires the image name to match the format username/imagename. Your locally built image needs to be tagged in this format before it can be pushed:

docker tag myapp:1.0 dheerajchoudhary/myapp:1.0

Or tag it correctly at build time to skip this step:

docker build -t dheerajchoudhary/myapp:1.0 .

Step 3: Push

docker push dheerajchoudhary/myapp:1.0

Docker pushes the image layer by layer. Layers that already exist on Docker Hub (because they're shared with other images, like a common Alpine base) are skipped with Layer already exists. Only new or changed layers are actually uploaded, which keeps push times fast.

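To push several tags of the same build, push each one in turn. After the first push the layers already exist on the registry, so the remaining pushes finish quickly: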

docker push dheerajchoudhary/myapp:1.2.3

docker push dheerajchoudhary/myapp:1.2

docker push dheerajchoudhary/myapp:1
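Those three tags all follow from a single version string, so they're easy to derive in a script. Here's a sketch that prints the tag and push commands for each level instead of running them. semver_tags and release_commands are hypothetical helper names, and the sketch assumes a plain MAJOR.MINOR.PATCH version.

```shell
# Print the tag levels for a MAJOR.MINOR.PATCH version, most specific first.
semver_tags() {
  version=$1
  major=${version%%.*}          # "1.2.3" -> "1"
  minor_patch=${version#*.}     # "1.2.3" -> "2.3"
  minor=${minor_patch%%.*}      # "2.3"   -> "2"
  printf '%s\n' "$version" "$major.$minor" "$major"
}

# Print the docker tag and push commands for every level (dry run only).
release_commands() {
  source_image=$1   # locally built image, e.g. myapp:1.2.3
  repo=$2           # target repository, e.g. username/myapp
  for tag in $(semver_tags "$3"); do
    echo "docker tag $source_image $repo:$tag"
    echo "docker push $repo:$tag"
  done
}
```

Review the output of release_commands myapp:1.2.3 username/myapp 1.2.3, then pipe it to sh to execute. If every local tag of a repository should go up, docker push --all-tags username/myapp does that in a single command on recent Docker versions.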

Pulling Images from Docker Hub

Anyone can pull an image from a public repository, with no authentication required:

# Pull the latest tag

docker pull dheerajchoudhary/myapp

# Pull a specific version

docker pull dheerajchoudhary/myapp:1.0

# Pull an official image

docker pull postgres:16-alpine

You don't always need to pull explicitly. docker run pulls the image automatically if it isn't in the local cache. But explicit pulls are useful in CI/CD pipelines to pre-warm the local cache, or to update a specific tag you already have locally.

To check whether your local image matches what's on the registry, compare the image digests:

# Show local image digest

docker images --digests dheerajchoudhary/myapp

# Pull contacts the registry, prints the digest the tag currently points to, and downloads any changes

docker pull dheerajchoudhary/myapp:1.0

Private Registries: Beyond Docker Hub

Docker Hub works well for public images and small teams. For production workloads, most organizations use a private registry that integrates with their cloud infrastructure and security tooling.

The main options:

AWS Elastic Container Registry (ECR) integrates with AWS IAM for authentication, meaning no separate credentials to manage if you're already on AWS. Images are stored in the same region as your compute resources, reducing pull latency.

Google Artifact Registry is the current recommended registry for Google Cloud, replacing the older Container Registry. It supports Docker images alongside other artifact types.

Azure Container Registry (ACR) integrates with Microsoft Entra ID (formerly Azure Active Directory) and Azure Kubernetes Service.

Harbor is a self-hosted open-source registry with vulnerability scanning, image signing, role-based access control, and replication between registries. The right choice when you need full control over where images are stored.

Pushing to a private registry follows the same pattern as Docker Hub, with the registry URL prepended to the image name:

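# AWS ECR (authenticate first; AWS account IDs are 12 digits)

aws ecr get-login-password --region us-east-1 | docker login --username AWS --password-stdin 123456789012.dkr.ecr.us-east-1.amazonaws.com

docker tag myapp:1.0 123456789012.dkr.ecr.us-east-1.amazonaws.com/myapp:1.0

docker push 123456789012.dkr.ecr.us-east-1.amazonaws.com/myapp:1.0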

# Google Artifact Registry

docker tag myapp:1.0 us-central1-docker.pkg.dev/myproject/myrepo/myapp:1.0

docker push us-central1-docker.pkg.dev/myproject/myrepo/myapp:1.0

The Complete Image Lifecycle: Dockerfile to Deployment

Here's the complete workflow, from nothing to a running deployment on a remote machine:

# 1. Write your Dockerfile (in your project root)

vim Dockerfile

# 2. Build the image locally

docker build -t myapp:1.0 .

# 3. Test it locally before pushing anywhere

docker run -p 8080:3000 myapp:1.0

# Open http://localhost:8080 and verify it works

# 4. Tag with your Docker Hub username

docker tag myapp:1.0 username/myapp:1.0

# 5. Log in and push to Docker Hub

docker login

docker push username/myapp:1.0

# 6. On any other machine (teammate's laptop, CI server, production VM)

docker pull username/myapp:1.0

# 7. Run in production

docker run -d -p 80:3000 --name myapp-prod username/myapp:1.0

Every step in this workflow is deterministic. As long as the 1.0 tag isn't overwritten in between, the image you tested locally in step 3 is byte-for-byte identical to what runs in step 7. That's the entire value proposition of Docker image management: the artifact you build is the artifact you deploy, with no environment-specific differences anywhere in the chain.
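When this flow moves into a script, the important property is that it stops at the first failure, so a broken build is never tagged or pushed. A minimal sketch under that assumption: run and release are hypothetical helpers, DRY_RUN is an invented switch that defaults to printing commands instead of executing them, and the local test step is left out for brevity.

```shell
# Run a command, or just print it when DRY_RUN=1 (the default here, for safety).
run() {
  if [ "${DRY_RUN:-1}" = 1 ]; then
    echo "+ $*"
  else
    "$@"
  fi
}

# Hypothetical release flow: build once, then tag and push that same image.
# Each step only runs if the previous one succeeded.
release() {
  local_image=$1     # e.g. myapp:1.0
  remote_image=$2    # e.g. username/myapp:1.0
  run docker build -t "$local_image" . &&
  run docker tag "$local_image" "$remote_image" &&
  run docker push "$remote_image"
}
```

Calling release myapp:1.0 username/myapp:1.0 prints the three commands. Setting DRY_RUN=0 first makes it execute them for real.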

Key Takeaways

docker build -t name:tag . builds an image from the Dockerfile in the current directory. The . is the build context, which is everything Docker can COPY from.

Always use a .dockerignore file to exclude node_modules, .git, .env, and other unnecessary files from the build context. Large contexts slow builds significantly.

Image names follow the format [registry/][namespace/]name[:tag]. Tags default to latest if omitted.

latest is not automatically the newest version of an image. It's simply the last image pushed without an explicit tag. Never rely on latest in production deployments.

Use semantic versioning for tags (1.2.3, 1.2, 1) and pin to exact versions in production. Tag the same build with multiple version levels for flexible consumption.

docker images lists local images. docker rmi removes them. docker image prune removes dangling untagged images. docker system prune -a is the nuclear option for reclaiming disk space.

Pushing to Docker Hub requires three steps: docker login, docker tag with your username prefix, then docker push.

Use --password-stdin in CI/CD pipelines instead of -p to prevent credentials appearing in logs or process listings.

For production workloads, private registries like AWS ECR, Google Artifact Registry, Azure ACR, or self-hosted Harbor are standard. The push/pull workflow is identical, just with the registry URL prepended to the image name.

The complete image lifecycle is: write Dockerfile → build → test locally → tag → push → pull → deploy. The image that passes local testing is identical to what runs in production.

Conclusion

Image lifecycle management is what takes Docker from a local development tool to a production deployment system. The build, tag, and push workflow is straightforward, but the details around tagging strategy, build context optimization, and registry choice make a meaningful difference in team workflow and deployment reliability.

The latest tag trap alone has caused enough production incidents that it's worth treating as a firm rule: always use explicit version tags in production, always. Everything else in this guide compounds on that foundation, from keeping build contexts lean with .dockerignore to using private registries with proper authentication in CI/CD pipelines.

The natural next topic after this is Docker Compose, which takes individual images and orchestrates multi-container applications where a web server, a database, and a cache all run together as a coordinated stack.
