👋 Hey there, I’m Dheeraj Choudhary, an AI/ML educator, cloud enthusiast, and content creator on a mission to simplify tech for the world.

After years of building on YouTube and LinkedIn, I’ve finally launched TechInsight Neuron, a no-fluff, insight-packed newsletter where I break down the latest in AI, Machine Learning, DevOps, and Cloud.

🎯 What to expect: actionable tutorials, tool breakdowns, industry trends, and career insights, all crafted for engineers, builders, and the curious.

🧠 If you're someone who learns by doing and wants to stay ahead in the tech game, you're in the right place.

Introduction

If you've ever heard someone say "it works on my machine" and watched a team spend hours debugging an environment mismatch, you already understand the core problem Docker solves. Docker is an open-source platform that packages applications into self-contained units called containers, so your code runs the same way on a laptop, a CI server, or a cloud VM without any manual configuration gymnastics.

Docker was introduced in 2013 and fundamentally changed how developers think about shipping software. Instead of handing off code and a list of setup instructions, you hand off a container that already has everything built in. The environment becomes part of the artifact. That's the idea. It's simple in concept but surprisingly deep once you start working with it, so this guide covers everything from what a container actually is to installing Docker and running your first one.

What Is Docker?

Docker is an open-source platform for building, shipping, and running applications in containers. Think of it like a shipping container in the physical world. Before standardized shipping containers, loading cargo onto a ship was slow and labor-intensive because every item had a different shape and needed special handling. Standardized containers changed that. It didn't matter what was inside the box; the shape was always the same, and every ship, crane, and truck knew how to handle it.

Docker does exactly that for software. It wraps up your application, its dependencies, configuration, libraries, and runtime into a single standardized unit. That unit behaves the same way no matter where it runs.

A few facts worth knowing upfront:

- Docker is open-source, maintained by Docker Inc., with a massive community of contributors

- Containers are not a new idea (Linux has had them for decades), but Docker made them practical and accessible starting in 2013

- Docker Desktop is available for Windows, Mac, and Linux as an easy installation option for local development

- Docker Hub is the default public registry where thousands of pre-built images are freely available to pull and use

What Is a Container?

A container is a lightweight, isolated process running on your host machine. That definition sounds dry, so let's make it concrete.

When you run a container, Docker creates an isolated environment using two Linux kernel features: namespaces and control groups (cgroups). Namespaces give the container its own view of the system: its own process tree, network interfaces, filesystem mounts, and hostname. From inside the container, it looks like it's running on its own machine. Control groups limit how much CPU, memory, and disk I/O the container can consume, so it can't accidentally starve other processes on the host.
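To make namespaces a little less abstract, here is a minimal sketch that lists the namespaces the current process belongs to. It assumes a Linux host with /proc mounted; on other systems it simply returns an empty result. The function name current_namespaces is made up for this illustration.

```python
import os

def current_namespaces(pid="self"):
    """Map namespace type -> kernel identifier for a process (Linux only)."""
    ns_dir = f"/proc/{pid}/ns"
    if not os.path.isdir(ns_dir):
        return {}  # not Linux, or /proc is unavailable
    namespaces = {}
    for name in sorted(os.listdir(ns_dir)):
        try:
            # Each entry is a symlink whose target looks like "pid:[4026531836]".
            namespaces[name] = os.readlink(os.path.join(ns_dir, name))
        except OSError:
            continue  # skip entries we are not allowed to read
    return namespaces

for name, ident in current_namespaces().items():
    print(f"{name:20} {ident}")
```

Two processes that share a namespace report the same identifier for it. Run the same script inside a container and on the host, and you should typically see different identifiers for entries such as pid, net, mnt, and uts.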

The result is something that feels like a tiny virtual machine but shares the host OS kernel directly. No second OS boot. No hypervisor overhead. Just your application running in its own isolated slice of the existing system.

A good analogy is an apartment building. Each apartment has its own space, locks, utilities, and residents. The tenants don't interfere with each other. But they all share the same foundation, plumbing infrastructure, and building structure. That shared foundation is the OS kernel. Containers are the apartments.

Key properties of a container:

- Starts in seconds (sometimes milliseconds), because there's no full OS to boot

- Portable: the same container image works on any Docker-capable host

- Isolated from other containers and the host, even though they share the kernel

- Defined by an image, which is a read-only, layered snapshot of the filesystem

A container is not the image itself. The image is the blueprint. The container is the running instance. You can create dozens of containers from the same image, just like you can build many apartments from the same architectural plan.
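The blueprint-versus-instance idea can be modeled in a few lines of Python. This is only a toy model, not how Docker stores files: the paths and contents are invented, and ChainMap stands in for the layered filesystem, with a private writable layer stacked on top of shared read-only layers.

```python
from collections import ChainMap
from types import MappingProxyType

# Hypothetical image: read-only layers, base first.
base_layer = MappingProxyType({"/bin/sh": "shell", "/etc/os-release": "alpine"})
app_layer = MappingProxyType({"/app/main.py": "print('hi')"})
image = (base_layer, app_layer)

def create_container(image_layers):
    """Stack a fresh writable layer on top of the image's read-only layers."""
    writable = {}
    # ChainMap searches left to right, so the newest layer wins on lookup,
    # and every write lands in the first mapping (the writable layer).
    return ChainMap(writable, *reversed(image_layers))

c1 = create_container(image)
c2 = create_container(image)

c1["/app/main.py"] = "print('patched')"   # change a file in container 1 only
c1["/tmp/scratch"] = "data"               # create a file in container 1 only

print(c1["/app/main.py"])        # print('patched')
print(c2["/app/main.py"])        # print('hi')
print("/tmp/scratch" in c2)      # False
```

Both containers start from the same image, yet a change in one never shows up in the other, and the image layers themselves stay untouched.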

How Docker Works: The Architecture Behind It

Docker uses a client-server architecture. Understanding it helps you reason about what's actually happening when you type a Docker command in your terminal.

Docker Client

The Docker Client is the CLI you interact with. When you type docker run nginx, the client translates that into an API request and forwards it to the Docker Daemon. The client and daemon can run on the same machine or on different ones.

Docker Daemon (dockerd)

The Docker Daemon is the background process that does all the actual work. It manages images, containers, networks, and volumes. It listens for API requests from the Docker Client over a Unix socket or a network interface. The daemon communicates with the container runtime (containerd, which in turn uses runc) to actually create and run containers using Linux namespaces and cgroups.

Docker Images

An image is a read-only, layered template built from a Dockerfile. Instructions that change the filesystem (such as RUN, COPY, and ADD) each add a new immutable layer on top of the previous one, while other instructions only record metadata. When you run a container, Docker adds a thin writable layer on top of those image layers, and that becomes your running container's filesystem. Images themselves never change. This layering is efficient: if multiple images share a common base layer (like Ubuntu or Alpine), that layer is stored only once on disk.
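Why is a shared base layer stored only once? A rough way to picture it is a store that identifies each layer by a hash of its content. The sketch below is a toy model under that assumption; LayerStore, the image names, and the layer contents are all invented for illustration.

```python
import hashlib

def layer_digest(content: bytes) -> str:
    """Identify a layer by the SHA-256 hash of its content."""
    return "sha256:" + hashlib.sha256(content).hexdigest()

class LayerStore:
    """Toy content-addressed store: identical layers are kept only once."""

    def __init__(self):
        self.blobs = {}    # digest -> layer content
        self.images = {}   # image name -> ordered list of layer digests

    def add_image(self, name, layers):
        digests = []
        for content in layers:
            digest = layer_digest(content)
            # setdefault stores the blob only if this digest is new.
            self.blobs.setdefault(digest, content)
            digests.append(digest)
        self.images[name] = digests

store = LayerStore()
base = b"alpine base filesystem"           # hypothetical shared base layer
store.add_image("api", [base, b"api code"])
store.add_image("worker", [base, b"worker code"])

print(len(store.blobs))  # 3 blobs stored for 4 layer references
```

The two images reference four layers in total, but the shared base is stored once, so only three blobs end up in the store.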

Docker Registry

A registry is where images are stored and distributed. Docker Hub is the default public registry, with thousands of official images from organizations like Nginx, PostgreSQL, Redis, and Node.js. When you run docker pull postgres, Docker fetches the image from Docker Hub unless you've specified a different registry.
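The "unless you've specified a different registry" part comes down to how a short image name is expanded. Here is a simplified sketch of that convention, assuming the usual defaults: Docker Hub addressed as docker.io, official images under the library namespace, and latest as the default tag. The function normalize_image_ref is a made-up helper, not part of any Docker library, and it skips edge cases the real parser handles.

```python
def normalize_image_ref(ref: str) -> str:
    """Expand a short image name into registry/path:tag form (simplified)."""
    first, sep, rest = ref.partition("/")
    # The first path component counts as a registry host only if it looks like one.
    if sep and ("." in first or ":" in first or first == "localhost"):
        registry, path = first, rest
    else:
        registry, path = "docker.io", ref
    # Official images on Docker Hub live under the "library" namespace.
    if registry == "docker.io" and "/" not in path:
        path = "library/" + path
    # No tag and no digest means the "latest" tag.
    last = path.rsplit("/", 1)[-1]
    if ":" not in last and "@" not in path:
        path += ":latest"
    return f"{registry}/{path}"

print(normalize_image_ref("postgres"))
# docker.io/library/postgres:latest
print(normalize_image_ref("registry.example.com:5000/team/app"))
# registry.example.com:5000/team/app:latest
```

So docker pull postgres is shorthand for a fully qualified Docker Hub reference, while a name that starts with a hostname points the pull at that registry instead.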

Organizations that need to keep images private typically use managed options: AWS Elastic Container Registry (ECR), Google Artifact Registry, Azure Container Registry, or the self-hosted open-source Harbor.

The Full Request Flow

Here's what happens when you run docker run nginx:

1. The Docker Client sends the request to dockerd via REST API

2. dockerd checks if the nginx image exists in the local image cache

3. If not found, dockerd pulls the image from Docker Hub

4. dockerd passes the image to containerd, which uses runc to create the container

5. runc sets up Linux namespaces and cgroups for isolation

6. The container starts and nginx begins serving
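The flow above can be sketched as a small simulation. Nothing here talks to a real daemon: docker_run, the cache, and the registry are plain Python stand-ins invented for illustration, and the point is only to show that the pull step happens on the first run and is skipped afterwards.

```python
def docker_run(image, local_cache, registry):
    """Simulate the steps behind `docker run <image>` and return them as text."""
    steps = [f"client -> dockerd: run {image}"]
    if image in local_cache:
        steps.append(f"dockerd: {image} found in local cache")
    else:
        steps.append(f"dockerd: {image} not in local cache, pulling from registry")
        local_cache[image] = registry[image]   # the pull fills the local cache
    steps.append("dockerd -> containerd -> runc: create container")
    steps.append("runc: set up namespaces and cgroups")
    steps.append(f"container started from {image}")
    return steps

registry = {"nginx": "nginx image layers"}   # hypothetical remote registry
cache = {}                                   # empty local image cache

first = docker_run("nginx", cache, registry)
second = docker_run("nginx", cache, registry)

print(first[1])    # dockerd: nginx not in local cache, pulling from registry
print(second[1])   # dockerd: nginx found in local cache
```

This mirrors what you see in practice: the first docker run of an image spends most of its time downloading, and later runs start almost immediately.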

Docker vs. Virtual Machines

This comparison comes up in every Docker conversation. Both containers and virtual machines let you run isolated environments on a single host machine. The critical difference is what each one virtualizes.

Virtual machines virtualize hardware. A hypervisor (VMware, VirtualBox, KVM, Hyper-V) sits between the physical hardware and the VMs. Each VM gets a virtual CPU, virtual RAM, virtual disk, and runs its own full guest operating system on top. That means each VM carries gigabytes of OS overhead, takes minutes to boot, and consumes significant resources even when sitting idle.

Containers virtualize the operating system. They share the host OS kernel directly. No hypervisor in between. No guest OS. The container carries only the application and its dependencies. Image sizes are typically in the range of megabytes instead of gigabytes, and startup time drops from minutes to seconds or less.

Here's a direct comparison:

| | Virtual Machine | Docker Container |
|---|---|---|
| Size | GBs (includes full OS) | MBs (app + dependencies only) |
| Startup time | Minutes | Seconds or milliseconds |
| OS kernel | Own guest OS per VM | Shared host OS kernel |
| Isolation | Strong (hardware-level) | Good (OS-level, via namespaces) |
| Resource usage | Heavy | Lightweight |
| Portability | Lower (OS-dependent images) | High (runs on any Docker host) |

Neither is universally better. VMs offer stronger isolation and support different guest operating systems on the same host, which matters for certain security requirements and mixed-OS workloads. Containers are faster, lighter, and more practical for microservices and CI/CD pipelines. In production, many teams run Docker containers inside VMs to combine the security boundary of VM-level isolation with the density benefits of containerization.

Why Teams Adopt Docker

The "it works on my machine" problem is the most famous one, but it's far from the only reason Docker has become a standard tool in modern development workflows.

Environment Consistency

A Docker image captures the entire runtime environment, not just the code. The same image runs in local development, testing, staging, and production. No more surprises caused by different library versions, OS patch levels, or configuration drift between environments. When a bug appears in production but not in development, Docker significantly narrows the list of possible causes.

Dependency Isolation

Imagine running two Python projects on the same machine: one needs Python 3.8 and an older version of a data library, the other needs Python 3.12 and a newer one. Without containers, you're wrestling with virtual environments and hoping nothing conflicts. With Docker, each container carries its own isolated dependencies. They never see each other.

Faster Developer Onboarding

Setting up a new development environment used to mean spending a full day following documentation that was probably already out of date. With Docker, a new team member runs docker compose up and the entire application stack spins up automatically. This compounds across a team and genuinely affects how fast people become productive.

Microservices Architecture

Modern applications are often broken into small, independently deployable services. Each service can run in its own container with its own dependencies, scale independently, and be updated without touching the rest of the system. Docker is a natural fit for this pattern, and it pairs well with orchestration tools like Kubernetes when the scale gets larger.

CI/CD Pipelines

Docker integrates cleanly into continuous integration and delivery workflows. Your CI runner pulls your Docker image, runs tests inside the container, and pushes a new image if everything passes. The build artifact is the image itself, already tested in an environment that matches production.
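As a rough sketch of that gate, the helper below builds an image, runs the tests inside a container, and pushes only if every earlier step succeeded. It is a simplified variant of the workflow, not a real CI configuration: ci_pipeline and the image name are invented for illustration, and the runner is injectable so the example can be tried as a dry run without Docker installed.

```python
import subprocess

def ci_pipeline(image, test_cmd, run=None):
    """Build an image, run tests inside it, and push only if the tests pass."""
    if run is None:
        # Default runner: execute the command and report its exit code.
        run = lambda cmd: subprocess.run(cmd).returncode
    steps = [
        ["docker", "build", "-t", image, "."],
        ["docker", "run", "--rm", image, *test_cmd],
        ["docker", "push", image],
    ]
    for cmd in steps:
        if run(cmd) != 0:
            return False   # stop at the first failure, so nothing broken is pushed
    return True

def dry_run(cmd):
    """Print the command instead of executing it."""
    print(" ".join(cmd))
    return 0

ci_pipeline("registry.example.com/team/app:1.0", ["pytest", "-q"], run=dry_run)
```

Real CI systems express the same idea in their own configuration format, but the ordering is the important part: the push comes last and depends on the test step.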

Cost Efficiency

Because containers share the host OS kernel rather than running full guest operating systems, you can run far more workloads on the same hardware compared to VMs. That translates to real infrastructure cost savings at scale, particularly in cloud environments where compute costs are directly tied to resource consumption.

Installing Docker on Any Platform

Installation varies by platform, but the end result is the same: a running Docker daemon and the docker CLI available in your terminal.

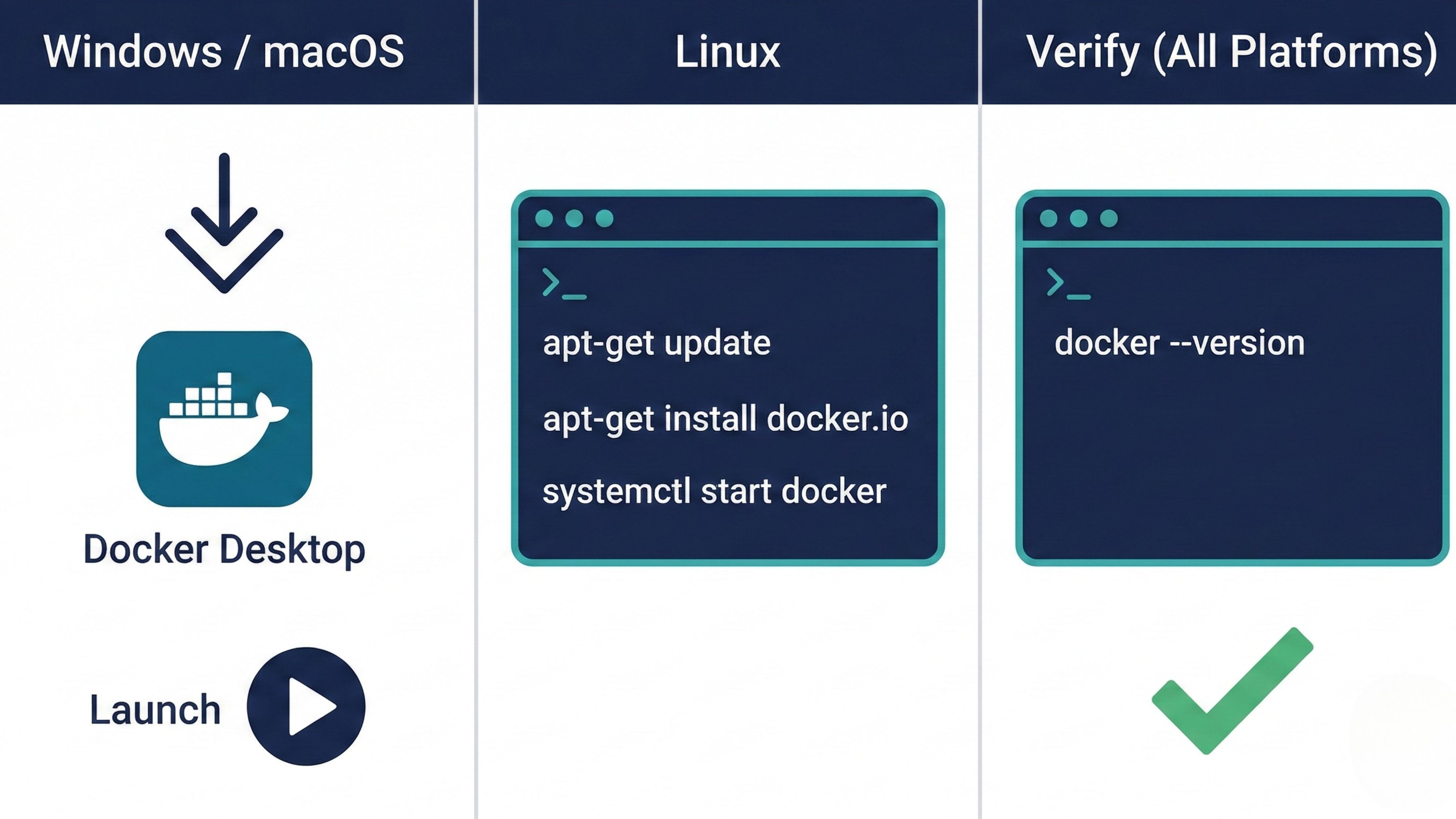

Windows and macOS

Download Docker Desktop from docker.com. Docker Desktop bundles the Docker daemon, the Docker CLI, Docker Compose, and a graphical interface for managing containers and images. On Windows, it runs on top of WSL 2 (Windows Subsystem for Linux 2) or Hyper-V. On macOS, it runs inside a lightweight Linux VM managed transparently in the background.

Steps:

1. Download the Docker Desktop installer from docker.com

2. Run the installer and follow the setup wizard

3. Restart your computer if prompted

4. Launch Docker Desktop from your applications

5. Accept the license agreement on first launch

Docker Desktop is free for personal use, open-source projects, and small businesses. Teams at larger organizations require a paid subscription.

Linux (Ubuntu/Debian)

On Linux, Docker Engine installs directly without the Desktop GUI. The quickest path to getting started:

Convenience script:

curl -fsSL https://get.docker.com -o get-docker.sh

sudo sh get-docker.sh

Manual install via apt:

sudo apt-get update

sudo apt-get install -y docker.io

sudo systemctl start docker

sudo systemctl enable docker

To run Docker commands without sudo, add your user to the docker group:

sudo usermod -aG docker $USER

newgrp docker

Note: Adding a user to the docker group grants root-equivalent permissions for Docker operations. On shared or production servers, be deliberate about who gets this access.

Verify the Installation

On any platform, confirm Docker is installed:

docker --version

You should see output like Docker version 27.x.x, build xxxxxxx. To confirm the daemon is running and responsive:

docker info

If both commands return output without errors, your installation is complete.
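If you'd rather script this check, for example in a project setup script, here is one way it might look. The helper check_docker is an invented name; it relies only on the docker CLI being on your PATH and on docker info exiting with a non-zero status when the daemon can't be reached.

```python
import shutil
import subprocess

def check_docker():
    """Return (cli_found, daemon_reachable) for the local Docker install."""
    if shutil.which("docker") is None:
        return False, False
    try:
        # `docker info` talks to the daemon, so a failure here means
        # the CLI is installed but the daemon is not reachable.
        result = subprocess.run(
            ["docker", "info"], capture_output=True, text=True, timeout=30
        )
    except (OSError, subprocess.TimeoutExpired):
        return True, False
    return True, result.returncode == 0

cli_found, daemon_reachable = check_docker()
print(f"docker CLI found: {cli_found}, daemon reachable: {daemon_reachable}")
```

Separating the two results helps with troubleshooting: a CLI that is found alongside an unreachable daemon usually means Docker Desktop or the docker service simply isn't running yet.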

Your First Docker Command

With Docker installed, here's the canonical first container to run:

docker run hello-world

Here's exactly what happens when you execute this:

1. The Docker Client sends the run hello-world request to the daemon

2. The daemon checks if the hello-world image exists in the local cache

3. It doesn't exist yet, so the daemon pulls it from Docker Hub automatically

4. The daemon creates a new container from the downloaded image

5. The container runs, outputs a welcome message to your terminal, and then exits cleanly

You'll see output starting with Hello from Docker! followed by a breakdown of the steps Docker just performed internally. That output confirms your full installation is working end-to-end: client, daemon, and registry all communicating correctly.

A Few More Commands to Try

# See running containers

docker ps

# See all containers, including stopped ones

docker ps -a

# List images you've downloaded

docker images

# Run an interactive Ubuntu container

docker run -it ubuntu bash

# Remove a stopped container

docker rm <container_id>

# Pull an image without running it

docker pull nginx

The docker run -it ubuntu bash command is worth trying early. The -it flags combine -i, which keeps standard input open, and -t, which allocates a terminal, so you get an interactive session. ubuntu is the image. bash is the process to run inside the container. You'll drop into a bash shell inside an isolated Ubuntu environment. Type exit to stop the container and return to your host shell. Anything you changed lived in that container's own filesystem, not on your actual machine.

Key Takeaways

- Docker is an open-source containerization platform that packages applications into portable, isolated units called containers

- Containers share the host OS kernel via Linux namespaces and cgroups, making them far lighter and faster to start than virtual machines

- Docker uses a client-server architecture: the CLI sends commands to the Docker Daemon (dockerd), which manages images, containers, networks, and volumes

- Docker images are read-only, layered templates. Containers are the live, writable instances created from those images

- Docker Hub is the default public registry with thousands of ready-to-use images available immediately

- Docker Desktop is the easiest way to get started on Windows and macOS; Docker Engine installs directly on Linux

- docker run hello-world validates a full installation by exercising the client, daemon, and registry together

- Containers and virtual machines are not competitors in every context: VMs offer hardware-level isolation; containers offer speed and density. Most production environments use both

Conclusion

Docker changes the relationship between an application and the environment it runs in. By treating the runtime as part of the build artifact, it eliminates a whole category of environment-related bugs that used to cost teams significant time. The tradeoff is learning a new mental model, but that model becomes intuitive quickly once you've run your first few containers.

This guide covers the foundation: what Docker is, how containers differ from VMs, how the client-daemon-registry architecture fits together, and how to get installed on any platform. From here, the natural next steps are writing a Dockerfile to containerize your own applications, learning Docker Compose for multi-container setups, and eventually exploring how Kubernetes builds on these same fundamentals for orchestration at scale.

The best way to get comfortable with Docker is to use it. Start with docker run hello-world, then pull a real image like postgres or nginx and run it locally. The sooner you get hands-on with containers, the sooner everything else clicks.
