👋 Hey there, I’m Dheeraj Choudhary, an AI/ML educator, cloud enthusiast, and content creator on a mission to simplify tech for the world.

After years of building on YouTube and LinkedIn, I’ve finally launched TechInsight Neuron, a no-fluff, insight-packed newsletter where I break down the latest in AI, Machine Learning, DevOps, and Cloud.

What to expect: actionable tutorials, tool breakdowns, industry trends, and career insights, all crafted for engineers, builders, and the curious.

If you're someone who learns by doing and wants to stay ahead in the tech game, you're in the right place.

Introduction

Two containers running on the same machine cannot find each other by name by default. A container cannot be reached from the outside world unless you explicitly configure it. And yet a web app container needs to reach its database container, and users need to reach the web app. Docker networking is what makes all of that possible.

Docker's networking model is one of its more powerful features, and also one of the easiest to get wrong when you're starting out. The default behavior looks like it works, until you try to connect two containers by name and discover that the default bridge network doesn't do DNS. Or you try to use the host network on a Mac and find it doesn't behave the way it does on Linux. Or you deploy to a multi-node cluster and realize bridge networking doesn't span hosts at all.

This guide covers every network driver Docker ships with, explains what actually happens under the hood when containers communicate, and walks through the right network setup for multi-container applications, with a complete practical example at the end.

How Container Networking Works

Each container runs in its own network namespace, a Linux kernel feature that gives it an isolated view of the network stack: its own interfaces, routing tables, and firewall rules. From inside a container, it looks like the container is the only thing on the network.

When Docker starts, it creates a virtual network bridge called docker0 on the host. This is a software-based Ethernet switch that lives inside the Linux kernel. When a container starts and connects to the default bridge network, Docker creates a virtual Ethernet pair: one end goes into the container (typically named eth0 inside the container), and the other end connects to docker0 on the host. Traffic between containers on the same bridge flows through docker0. Traffic to the internet goes through the host's network interface via NAT masquerading.

This is why containers can reach the internet by default (outbound traffic works through the host's connection), but external traffic can't reach a container unless you explicitly publish a port.

Docker Network Drivers: The Complete List

Docker ships with several built-in network drivers. Each is suited to a different connectivity scenario.

| Driver | Scope | Use Case |
|---|---|---|
| bridge | Single host | Default. Container-to-container on same host |
| host | Single host | No isolation, container shares host network |
| overlay | Multi-host | Containers across multiple Docker hosts (Swarm) |
| ipvlan | Single host | Full control over Layer 2 and Layer 3 addressing |
| macvlan | Single host | Container appears as physical device on network |
| none | Single host | Complete network isolation |

For the vast majority of single-host Docker work, you'll use bridge networks (default or custom). For multi-host deployments with Docker Swarm, overlay is the driver to reach for. Host and none are specialized tools for specific situations.

The Default Bridge Network

When Docker installs, it automatically creates three networks:

docker network ls

# NETWORK ID NAME DRIVER SCOPE

# 17e324f45964 bridge bridge local

# 6ed54d316334 host host local

# 7092879f2cc8 none null local

The bridge network is the default. Every container you start without specifying a --network flag automatically connects to it.

docker run -d --name web nginx

# The mysql image exits at startup unless a root password option is set

docker run -d --name db -e MYSQL_ROOT_PASSWORD=secret mysql:8

# Both connect to the default bridge automatically

# Both get an IP in the 172.17.0.0/16 range

Containers on the default bridge can communicate with each other using IP addresses. They can also reach the internet through the host. But they cannot be reached from outside the host unless you publish ports with -p.

# Find each container's IP on the default bridge

docker inspect web --format '{{.NetworkSettings.IPAddress}}'

# 172.17.0.2

docker inspect db --format '{{.NetworkSettings.IPAddress}}'

# 172.17.0.3

# From inside the web container, db is reachable at 172.17.0.3

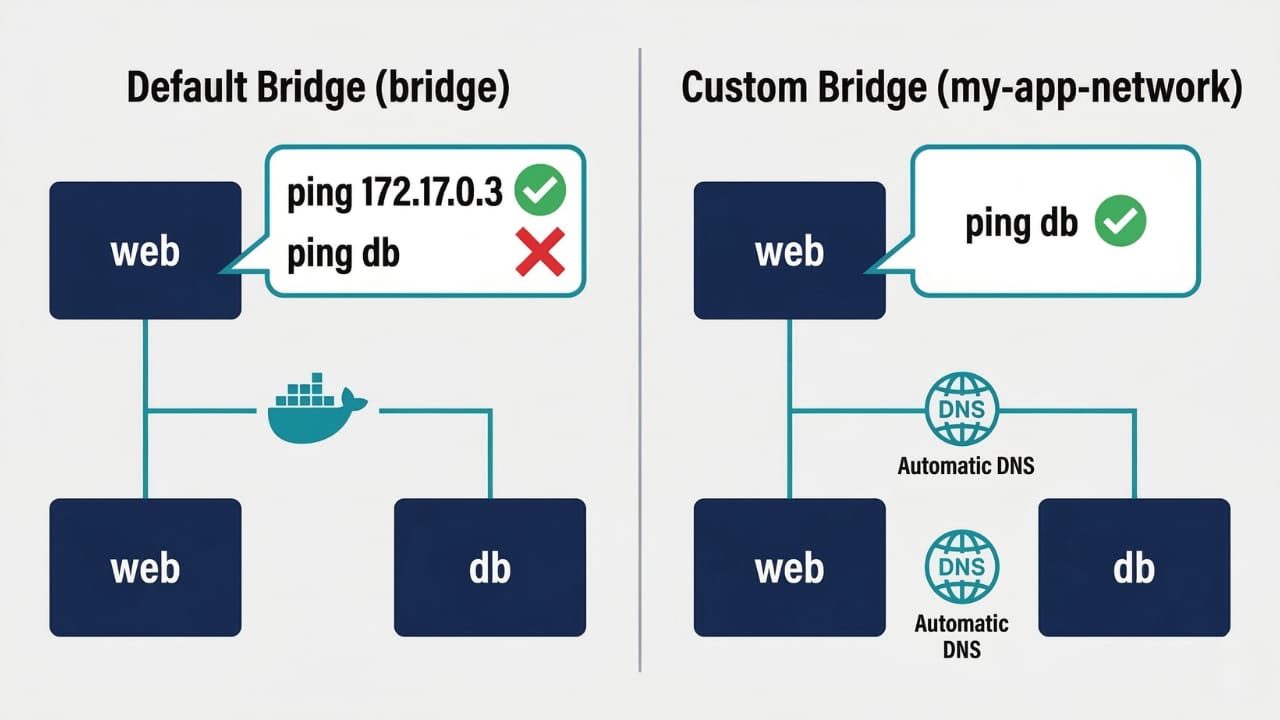

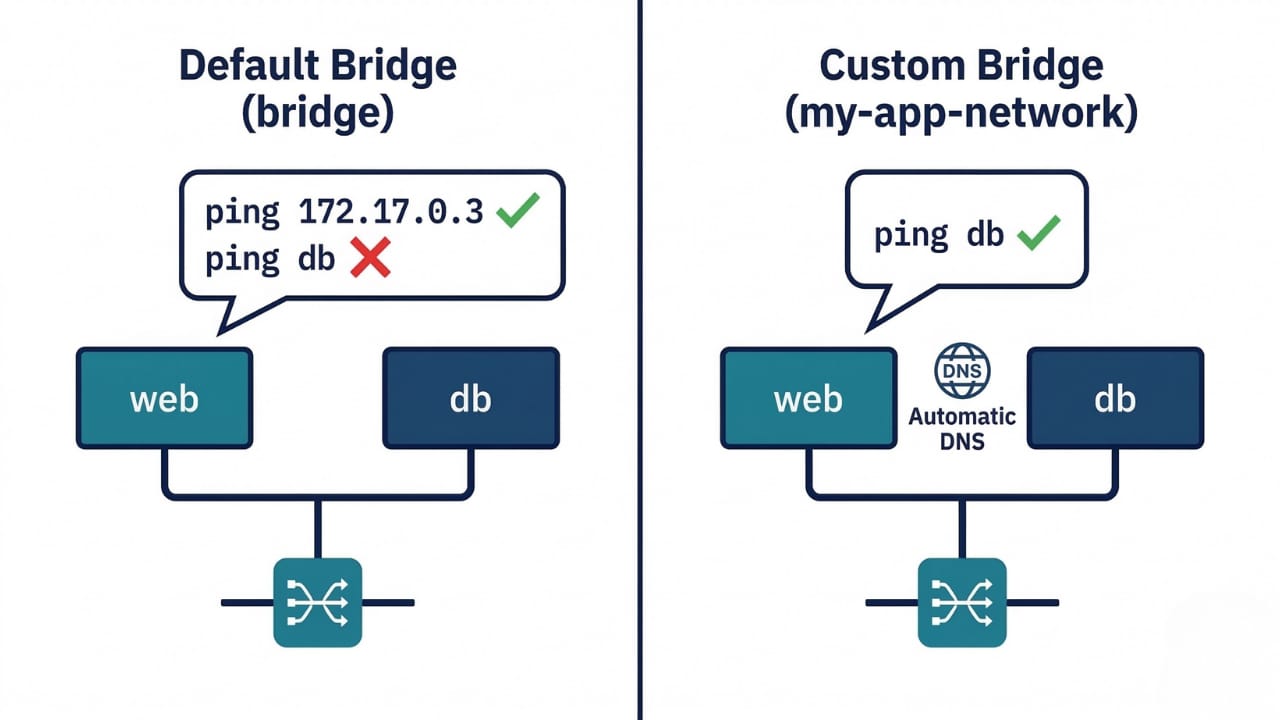

# But NOT at hostname "db"Why the Default Bridge Is Not Enough

The default bridge network has one critical limitation: no automatic DNS resolution. Containers can only reach each other by IP address, not by name.

This is a significant problem in practice. IP addresses on the default bridge are assigned dynamically. If you remove and recreate a container, it gets a different IP. Hardcoding IPs into application configuration is fragile and breaks constantly.
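To see why hardcoded addresses break, here is a minimal toy model in Python. It is not Docker's real address allocator: the `ToyBridge` class and its "lowest free address" rule are assumptions made for illustration. The point is only that an address belongs to whoever holds it at the moment, not to a container name.

```python
import ipaddress
from typing import Dict


class ToyBridge:
    """Toy model: hands out the lowest free address, skipping the gateway."""

    def __init__(self, subnet: str = "172.17.0.0/16") -> None:
        self.network = ipaddress.ip_network(subnet)
        # Assume the first usable address is the gateway (172.17.0.1 here).
        self.gateway = next(iter(self.network.hosts()))
        self.assigned: Dict[str, ipaddress.IPv4Address] = {}

    def start(self, name: str) -> str:
        """Attach a container and return the address it was given."""
        in_use = set(self.assigned.values())
        for address in self.network.hosts():
            if address != self.gateway and address not in in_use:
                self.assigned[name] = address
                return str(address)
        raise RuntimeError("subnet exhausted")

    def remove(self, name: str) -> None:
        """Detach a container and free its address for reuse."""
        self.assigned.pop(name)


if __name__ == "__main__":
    bridge = ToyBridge()
    print("web  ", bridge.start("web"))
    print("db   ", bridge.start("db"))
    bridge.remove("db")                      # db is removed...
    print("cache", bridge.start("cache"))    # ...and another container takes its address
    print("db   ", bridge.start("db"))       # the recreated db lands somewhere else
```

Any configuration that pointed at db's old address now reaches the cache container instead, which is the kind of failure that name-based discovery avoids.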

The official Docker documentation is clear on this point: containers on the default bridge network can only access each other by IP addresses, unless you use the --link option, which is considered legacy. On a user-defined bridge network, containers can resolve each other by name or alias.

There's also a security consideration. The default bridge network gives all containers on it unrestricted access to each other. You can't easily isolate groups of containers that shouldn't communicate. On custom networks, you get fine-grained control: containers can only reach other containers on the same network.

The right solution for any multi-container application is a custom bridge network. Never use the default bridge for production work.

Custom Bridge Networks: The Right Way

Creating a custom bridge network takes one command:

docker network create my-app-network

By default this creates a bridge network. You can be explicit about the driver:

docker network create --driver bridge my-app-network

You can also customize the subnet and gateway if you need to avoid conflicts with other networks on the host:

docker network create \
  --driver bridge \
  --subnet 192.168.10.0/24 \
  --gateway 192.168.10.1 \
  my-app-network

To connect containers to this network, use the --network flag when starting them:

docker run -d --name db --network my-app-network -e MYSQL_ROOT_PASSWORD=secret mysql:8

docker run -d --name web --network my-app-network nginx

Now the web container can reach db using the hostname db, and vice versa. No IP addresses. No hardcoding. If you recreate the db container, web can still reach it at db regardless of what IP it gets assigned.
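When you pick a custom subnet and gateway as in the earlier command, it can help to sanity-check the values before creating the network. The sketch below uses Python's standard ipaddress module. The helper names `find_overlaps` and `gateway_is_valid` are made up for this example, and the list of existing subnets is something you would gather yourself (for instance from docker network inspect).

```python
import ipaddress
from typing import Iterable, List


def find_overlaps(candidate: str, existing: Iterable[str]) -> List[str]:
    """Return the existing subnets that overlap the candidate subnet."""
    wanted = ipaddress.ip_network(candidate)
    return [s for s in existing if wanted.overlaps(ipaddress.ip_network(s))]


def gateway_is_valid(subnet: str, gateway: str) -> bool:
    """A gateway should be a usable host address inside the subnet."""
    network = ipaddress.ip_network(subnet)
    address = ipaddress.ip_address(gateway)
    return (
        address in network
        and address != network.network_address
        and address != network.broadcast_address
    )


if __name__ == "__main__":
    # Assumed example data: the default bridge range plus a wide LAN range.
    existing = ["172.17.0.0/16", "192.168.0.0/16"]
    print(find_overlaps("192.168.10.0/24", existing))          # conflicts with the LAN range
    print(gateway_is_valid("192.168.10.0/24", "192.168.10.1"))
```

If the overlap list is not empty, choose a different range for --subnet rather than hoping routing sorts itself out.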

How Docker DNS Works on Custom Networks

When you create a custom network, Docker starts an embedded DNS server for that network. Every container that joins the network is automatically registered with this DNS server using its container name as the hostname.

When a container makes a DNS lookup for another container's name (like db), the request goes to Docker's embedded DNS server at 127.0.0.11 inside the container. The DNS server resolves the name to the target container's current IP on that network and returns it. The calling container connects to that IP.

# From inside the web container on a custom network

cat /etc/resolv.conf

# nameserver 127.0.0.11 ← Docker's embedded DNS server

# options ndots:0

# This resolves correctly

ping db

# PING db (172.18.0.2): 56 data bytes ...

This also means that if you recreate the db container and it gets a new IP, the DNS server automatically returns the new IP on the next lookup. Your application config pointing to db:3306 never needs to change.

You can also give a container additional aliases on a network, so it's reachable under multiple names:

docker run -d \
  --name mysql-primary \
  --network my-app-network \
  --network-alias db \
  --network-alias database \
  -e MYSQL_ROOT_PASSWORD=secret \
  mysql:8

Now the container is reachable as mysql-primary, db, or database from other containers on my-app-network.
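The behavior described in this section (names and aliases that follow a container across recreation) can be pictured as a small lookup table. The Python sketch below is a toy model, not Docker's embedded DNS implementation. The `ToyNetworkDns` class and the IP addresses are assumptions chosen to mirror the examples above.

```python
from typing import Dict, Iterable, List, Optional


class ToyNetworkDns:
    """Toy name table for one user-defined network."""

    def __init__(self) -> None:
        self._records: Dict[str, str] = {}       # hostname or alias -> current IP
        self._names: Dict[str, List[str]] = {}   # container -> every name it owns

    def attach(self, container: str, ip: str, aliases: Iterable[str] = ()) -> None:
        """Register the container name and any aliases against its current IP."""
        names = [container, *aliases]
        self._names[container] = names
        for name in names:
            self._records[name] = ip

    def detach(self, container: str) -> None:
        """Forget every name that belonged to the container."""
        for name in self._names.pop(container, []):
            self._records.pop(name, None)

    def resolve(self, name: str) -> Optional[str]:
        """Return the current IP for a name, or None if nothing owns it."""
        return self._records.get(name)


if __name__ == "__main__":
    dns = ToyNetworkDns()
    dns.attach("mysql-primary", "172.18.0.2", aliases=["db", "database"])
    print(dns.resolve("db"))
    # Recreate the container: same names, different address.
    dns.detach("mysql-primary")
    dns.attach("mysql-primary", "172.18.0.5", aliases=["db", "database"])
    print(dns.resolve("db"))
```

The application keeps asking for db and gets whichever address is current, so its configuration never has to change.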

Host Network: Maximum Performance, No Isolation

The host network driver removes all network isolation between the container and the Docker host. The container doesn't get its own network namespace. It shares the host's network stack directly, with the same IP address, the same interfaces, and the same port space.

docker run -d --network host nginx

With host networking, nginx listens on port 80 of the host directly. There's no -p flag needed, and no NAT. The port is just open on the host.

The performance advantage is real. NAT and the virtual bridge add overhead. For network-intensive workloads like high-throughput proxies, network monitoring tools, or latency-sensitive applications, host networking can provide measurably better performance.

The tradeoffs are significant though. There's no network isolation at all. The container can see and potentially interfere with all network interfaces on the host. Port conflicts become your problem: if the host is already using port 80, an nginx container in host mode will fail to bind the port and exit. And host network mode only works as described on Linux. On Docker Desktop for Mac and Windows, Docker runs inside a lightweight Linux VM, and by default --network host gives the container access to that VM's network, not your actual Mac or Windows network, which is almost never what you want. Recent Docker Desktop releases add an opt-in host networking setting, but it comes with limitations, so check the documentation for your version before relying on it.

# Linux only - container shares host's network directly

docker run -d --network host nginx

# nginx is now accessible at host-ip:80 directly

# On Mac/Windows - this does NOT expose to your host machine

# It only exposes to the Docker Desktop VM

docker run -d --network host nginx

Use host networking only when you have a specific performance requirement that bridge networking can't meet, and only on Linux hosts.

None Network: Complete Isolation

The none driver gives the container a network namespace with no interfaces except the loopback interface (lo). The container cannot communicate with anything, including the host and other containers.

docker run -d --network none myapp

Inside such a container, ping google.com fails. curl fails. No outbound traffic, no inbound traffic. The only thing that works is communication within the container itself (localhost).

This is useful for batch processing containers that read input from a mounted volume, process it, write output to another volume, and have no legitimate reason to make network calls. Disabling networking entirely reduces the attack surface.
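As an illustration of the kind of workload that fits this mode, here is a minimal Python batch job that touches only the filesystem. Everything in it is an assumption for the sake of example: the `process_directory` function, the upper-casing "work", and the idea that the input and output directories are mounted volumes.

```python
import pathlib
import tempfile


def process_directory(input_dir: pathlib.Path, output_dir: pathlib.Path) -> int:
    """Upper-case every .txt file from input_dir into output_dir; return the count."""
    output_dir.mkdir(parents=True, exist_ok=True)
    processed = 0
    for source in sorted(input_dir.glob("*.txt")):
        text = source.read_text(encoding="utf-8")
        (output_dir / source.name).write_text(text.upper(), encoding="utf-8")
        processed += 1
    return processed


if __name__ == "__main__":
    # Stand-in for two mounted volumes: a temporary directory with in/ and out/.
    with tempfile.TemporaryDirectory() as tmp:
        root = pathlib.Path(tmp)
        (root / "in").mkdir()
        (root / "in" / "report.txt").write_text("nightly totals", encoding="utf-8")
        count = process_directory(root / "in", root / "out")
        print(count, (root / "out" / "report.txt").read_text(encoding="utf-8"))
```

A job like this never opens a socket, so running its image with --network none costs nothing and rules out a whole class of unwanted outbound calls.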

Overlay Networks: Multi-Host Communication

Bridge networks are scoped to a single Docker host. Two containers on different machines cannot communicate through a bridge network. Overlay networks solve this.

An overlay network creates a virtual flat network that spans multiple Docker hosts, each running Docker Engine. Containers on different hosts but connected to the same overlay network can communicate directly using container names, just like they would on a custom bridge network on a single host.

Overlay networks require Docker Swarm mode to be initialized, even if you're just using them for standalone container communication:

# Initialize swarm on the first host

docker swarm init

# Create an overlay network

docker network create --driver overlay --attachable my-overlay

# The --attachable flag allows standalone containers (not just swarm services) to connect

Once the overlay network exists, containers on any host in the swarm that join the network can communicate with each other. Docker handles the encapsulation and routing of traffic between hosts transparently.

Automatic DNS container discovery works with overlay networks, but only with unique container names. If two containers on different hosts have the same name, DNS resolution becomes ambiguous.

Overlay networks are the foundation of Docker Swarm's service mesh. When you deploy services in Swarm mode, they connect to overlay networks, and Swarm handles load balancing across service replicas automatically.

Network Management Commands

# List all networks

docker network ls

# Inspect a network (shows containers, IP ranges, driver)

docker network inspect my-app-network

# Create a network

docker network create my-app-network

# Create with specific subnet and gateway

docker network create \
  --subnet 10.10.0.0/16 \
  --gateway 10.10.0.1 \
  my-app-network

# Connect a running container to a network

docker network connect my-app-network my-container

# Disconnect a running container from a network

docker network disconnect my-app-network my-container

# Remove a network (fails if any container is connected)

docker network rm my-app-network

# Remove all networks not used by any container

docker network prune

docker network inspect is particularly useful for debugging. It shows every container connected to a network, each container's IP address on that network, the subnet, gateway, and driver details:

docker network inspect my-app-network

# Returns JSON with:

# - Network driver and scope

# - Subnet and gateway

# - Each connected container with its name and IP

# - Network options and labels

Port Publishing: Exposing Containers to the Outside

Containers on bridge networks are isolated from the external world by default. To make a container's port accessible from outside the host, you publish it with -p:

# Map host port 8080 to container port 80

docker run -d -p 8080:80 nginx

# Map host port 5432 to container port 5432

docker run -d -p 5432:5432 -e POSTGRES_PASSWORD=secret postgres

# Bind to a specific host interface (more secure)

docker run -d -p 127.0.0.1:8080:80 nginx

# Only accessible from localhost, not from the network

# Let Docker pick a random available host port

docker run -d -p 80 nginx

# Run "docker port <container-name> 80" to find which host port was assigned

The basic format is host-port:container-port, optionally prefixed with a host IP address. If you give only a container port, Docker picks a free host port for you. The container-side port is where your application listens inside the container. The host-side port is what the outside world uses to reach it.

Binding to 127.0.0.1 instead of all interfaces is a good security practice for development. It prevents a database port from accidentally being accessible from your local network.

Port publishing is only necessary for external access. Container-to-container communication within the same custom network happens directly on the internal network without any port publishing. The web container can reach db:3306 without 3306 ever being published to the host.

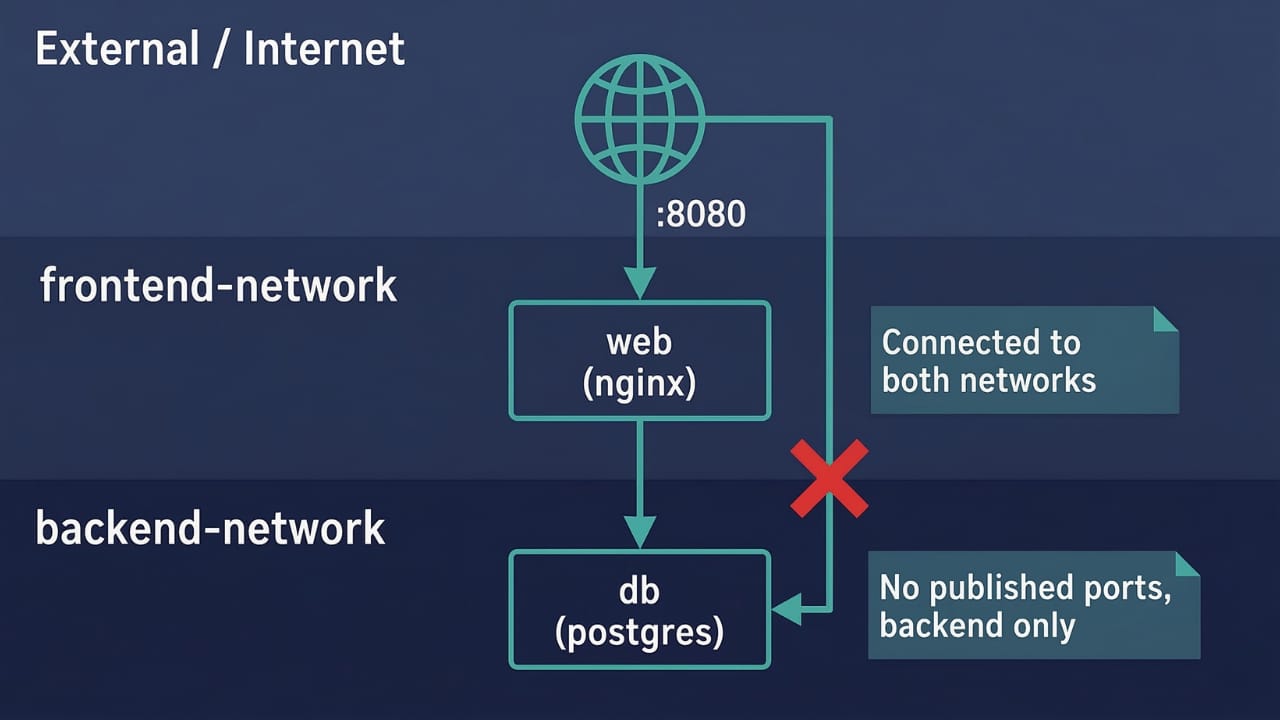
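The forms of the -p value used in this section can be spelled out as a small parser. This is a sketch for understanding the syntax, not Docker's own parsing code: the `PortMapping` type and `parse_publish` function are invented here, and IPv6 addresses and port ranges are deliberately left out.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class PortMapping:
    host_ip: Optional[str]      # None means all host interfaces
    host_port: Optional[int]    # None means Docker picks a free host port
    container_port: int
    protocol: str = "tcp"


def parse_publish(spec: str) -> PortMapping:
    """Parse 'container', 'host:container', or 'ip:host:container' (IPv4 only)."""
    ports, _, protocol = spec.partition("/")   # optional '/tcp' or '/udp' suffix
    parts = ports.split(":")
    if len(parts) == 1:
        host_ip, host_port, container_port = None, None, parts[0]
    elif len(parts) == 2:
        host_ip = None
        host_port, container_port = parts
    elif len(parts) == 3:
        host_ip, host_port, container_port = parts
    else:
        raise ValueError(f"unsupported publish spec: {spec!r}")
    return PortMapping(
        host_ip=host_ip or None,
        host_port=int(host_port) if host_port else None,
        container_port=int(container_port),
        protocol=protocol or "tcp",
    )


if __name__ == "__main__":
    for spec in ("8080:80", "127.0.0.1:8080:80", "80"):
        print(spec, "->", parse_publish(spec))
```

Reading a mapping right to left is a handy habit: the last number is always the container port, the one before it (if any) is the host port, and anything before that is the host address to bind.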

Connecting a Container to Multiple Networks

A container can be connected to more than one network simultaneously. This is useful for architectures where some containers should be accessible from outside while others should only be reachable internally.

# Create two networks

docker network create frontend-network

docker network create backend-network

# Web container connects to both

docker run -d \
  --name web \
  --network frontend-network \
  -p 8080:80 \
  nginx

docker network connect backend-network web

# Database only on the backend network (not reachable from outside)

docker run -d \
  --name db \
  --network backend-network \
  -e POSTGRES_PASSWORD=secret \
  postgres

Now web can reach db through backend-network, and external users can reach web through the published port on frontend-network. But db has no published ports and isn't on frontend-network, so external traffic and frontend-only containers can't reach it directly.

This pattern is a basic form of network segmentation. It mirrors the classic DMZ architecture where a public-facing tier sits between the internet and a private backend tier.

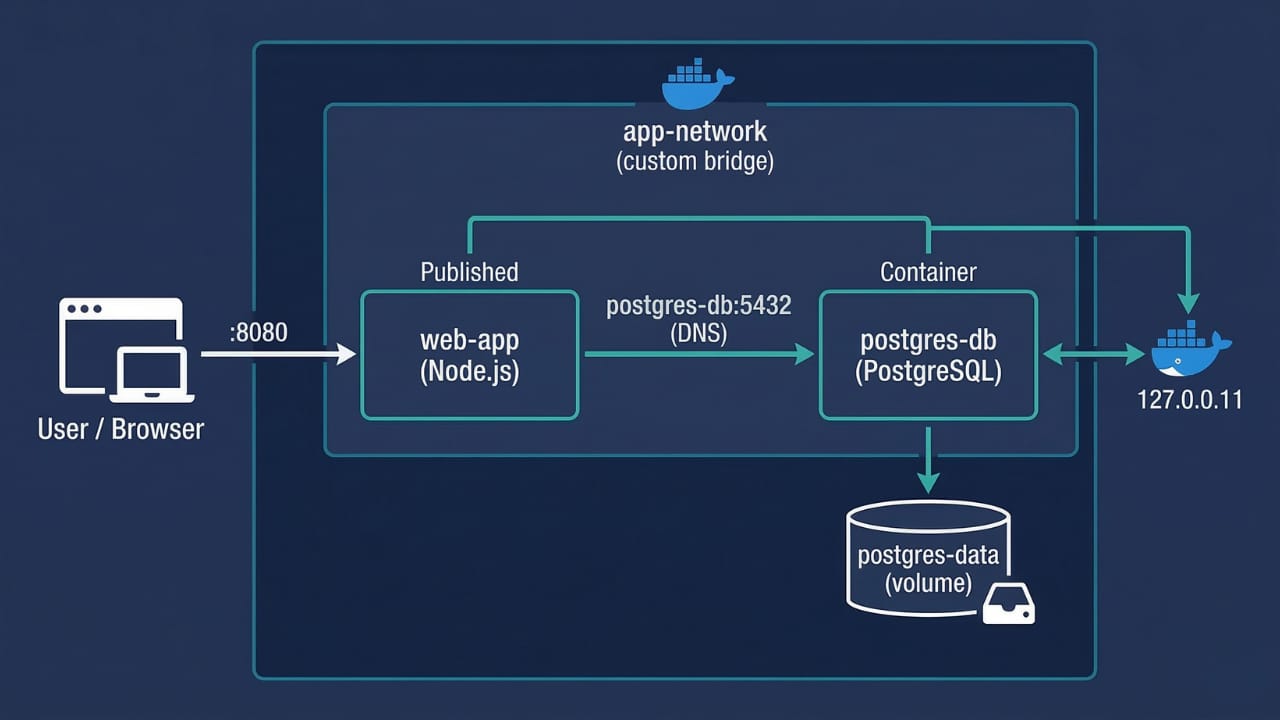
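The segmentation rule boils down to one check: two containers can talk directly only if they share a network. The Python sketch below models that rule for the setup above. The `can_reach` helper is made up for illustration, and `proxy` is a hypothetical frontend-only container added for contrast.

```python
from typing import Dict, Set

# Network membership from the example; "proxy" is a hypothetical extra container.
MEMBERSHIP: Dict[str, Set[str]] = {
    "web": {"frontend-network", "backend-network"},
    "db": {"backend-network"},
    "proxy": {"frontend-network"},
}


def can_reach(source: str, target: str, membership: Dict[str, Set[str]] = MEMBERSHIP) -> bool:
    """Two containers can talk directly only if they share at least one network."""
    return bool(membership[source] & membership[target])


if __name__ == "__main__":
    print("web   -> db :", can_reach("web", "db"))      # share backend-network
    print("proxy -> web:", can_reach("proxy", "web"))   # share frontend-network
    print("proxy -> db :", can_reach("proxy", "db"))    # no network in common
```

This ignores published ports, which are a separate path into a container from outside the host. Here db publishes none, so the shared-network check is the whole story for it.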

Practical Example: Web App and Database

Here's a complete setup for a Node.js web application connecting to a PostgreSQL database, using a custom bridge network so containers can find each other by name.

# Step 1: Create a custom network

docker network create app-network

# Step 2: Start the database container

docker run -d \
  --name postgres-db \
  --network app-network \
  -e POSTGRES_USER=appuser \
  -e POSTGRES_PASSWORD=secret \
  -e POSTGRES_DB=myapp \
  --mount type=volume,source=postgres-data,target=/var/lib/postgresql/data \
  postgres:16-alpine

# Step 3: Start the web application container

docker run -d \
  --name web-app \
  --network app-network \
  -p 8080:3000 \
  -e DATABASE_URL=postgresql://appuser:secret@postgres-db:5432/myapp \
  my-web-app:1.0

# Step 4: Verify both containers are on the network

docker network inspect app-network

# Step 5: Test connectivity from web-app to postgres-db

docker exec web-app ping -c 2 postgres-db

# PING postgres-db (172.18.0.2): 56 data bytes

# 64 bytes from 172.18.0.2: ...

Notice the DATABASE_URL in step 3: it uses postgres-db as the hostname. The web application's code doesn't need to know any IP addresses. Docker's embedded DNS on app-network resolves postgres-db to whatever IP the database container currently has.

Also notice the volume on the database container. Combining a custom network for connectivity with a named volume for persistence is the standard pattern for any stateful service in Docker.
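If you would rather script these steps than type them, one possible approach is to build the argument lists in Python and hand them to subprocess. The sketch below only constructs and prints the web-app command, so it runs without Docker installed. The helper names `database_url` and `docker_run_args` are invented for this example, and the flags are the same ones used in the steps above.

```python
import shlex
from typing import Dict, List, Optional
from urllib.parse import quote


def database_url(user: str, password: str, host: str, port: int, database: str) -> str:
    """Build a PostgreSQL URL; the host is a container name, never an IP."""
    credentials = f"{quote(user, safe='')}:{quote(password, safe='')}"
    return f"postgresql://{credentials}@{host}:{port}/{database}"


def docker_run_args(
    name: str,
    image: str,
    network: str,
    env: Optional[Dict[str, str]] = None,
    publish: Optional[str] = None,
) -> List[str]:
    """Return the argv for a detached 'docker run' on a named network."""
    args = ["docker", "run", "-d", "--name", name, "--network", network]
    for key, value in (env or {}).items():
        args += ["-e", f"{key}={value}"]
    if publish:
        args += ["-p", publish]
    args.append(image)
    return args


if __name__ == "__main__":
    url = database_url("appuser", "secret", "postgres-db", 5432, "myapp")
    web = docker_run_args(
        "web-app", "my-web-app:1.0", "app-network",
        env={"DATABASE_URL": url}, publish="8080:3000",
    )
    # Print instead of executing; pass `web` to subprocess.run to actually start it.
    print(shlex.join(web))
```

Percent-encoding the credentials matters once a password contains characters such as @ or :, which would otherwise be read as part of the URL structure.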

Key Takeaways

Every container gets its own network namespace with an isolated network stack. Docker connects containers to networks by creating virtual Ethernet pairs linked to a virtual bridge.

Docker ships with six built-in network drivers: bridge (default), host, overlay, ipvlan, macvlan, and none. Bridge is the right choice for single-host container communication.

The default bridge network connects containers automatically but provides no DNS resolution. Containers can only communicate using IP addresses, which are dynamic and fragile.

Never use the default bridge network for multi-container applications. Create a custom bridge network instead.

Custom bridge networks provide automatic DNS resolution using Docker's embedded DNS server at 127.0.0.11. Containers reach each other by name, not by IP.

Container name aliases can be added with --network-alias, allowing a container to be addressable under multiple hostnames on the same network.

Host network mode removes all isolation and shares the host's network stack directly with the container. It only works as expected on Linux. It's not the right choice unless you have a specific performance requirement.

None network disables all networking except loopback. Use it for containers that genuinely have no legitimate network requirements.

Overlay networks span multiple Docker hosts using Swarm mode. They're the foundation of Docker Swarm's service-to-service communication.

Port publishing (-p host:container) is required only for external access. Container-to-container traffic within a custom network never needs published ports.

Containers can join multiple networks simultaneously, enabling network segmentation where a public-facing container can serve external traffic and still reach internal backend services.

docker network inspect is the most useful debugging command. It shows every connected container, its IP, the subnet, and driver details.
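Because docker network inspect prints JSON, its output is easy to summarize in a script. The Python sketch below works on a trimmed, hand-written sample rather than real output, so the IDs and addresses are illustrative. It assumes a bridge network with at least one IPAM config entry, and the `summarize` helper is a name made up for this example.

```python
import json
from typing import Any, Dict

# Hand-written sample in the shape of `docker network inspect` output (trimmed).
SAMPLE = """
[
  {
    "Name": "my-app-network",
    "Driver": "bridge",
    "Scope": "local",
    "IPAM": {"Config": [{"Subnet": "172.18.0.0/16", "Gateway": "172.18.0.1"}]},
    "Containers": {
      "abc123": {"Name": "db", "IPv4Address": "172.18.0.2/16"},
      "def456": {"Name": "web", "IPv4Address": "172.18.0.3/16"}
    }
  }
]
"""


def summarize(inspect_json: str) -> Dict[str, Any]:
    """Pull the fields most useful for debugging out of the inspect output."""
    network = json.loads(inspect_json)[0]      # inspect returns a JSON array
    ipam = network["IPAM"]["Config"][0]        # assumes one IPAM config entry
    containers = {
        entry["Name"]: entry["IPv4Address"].split("/")[0]   # drop the prefix length
        for entry in (network.get("Containers") or {}).values()
    }
    return {
        "name": network["Name"],
        "driver": network["Driver"],
        "subnet": ipam["Subnet"],
        "gateway": ipam["Gateway"],
        "containers": containers,
    }


if __name__ == "__main__":
    print(summarize(SAMPLE))
```

To use it against a live network, feed it the text produced by docker network inspect my-app-network instead of the sample string.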

Conclusion

Docker networking is what transforms a collection of isolated containers into a working application. The key insight is that the default bridge network's lack of DNS is not an oversight but a deliberate design choice, and the solution is always a custom bridge network for any multi-container work.

The pattern you'll use most often for single-host applications is: one custom network per application, all containers for that application connected to it, database containers with no published ports (only internal access), and web or API containers with published ports for external access. That combination gives you container-level DNS, isolation between applications, and controlled external exposure.

For anything that needs to span multiple hosts, Docker Swarm with overlay networks is the next step. Docker Compose (covered next in this series) makes it straightforward to define networks declaratively alongside container definitions, so you never have to wire up networks manually from the command line again.
